
Every analytics vendor claims AI. Few can prove their AI is doing real analytical work. Here is what executives need to verify before committing budget to an AI-powered analytics tool.

TL;DR

- “Embedded generative AI” means AI that is woven into the analytics workflow itself, querying live data against standardized metric definitions, not a chatbot layered on top of a legacy dashboard.

- Only about 1 in 5 organizations qualify as true AI ROI leaders. Three structural failure modes (dirty data, bolted-on AI architecture, and no semantic layer) explain why the rest underperform.

- A semantic layer is the single most important architectural prerequisite for accurate AI analytics, improving NLQ (natural language querying) accuracy on complex queries from 0% to 70%.

- Executives should evaluate any solution against five non-negotiable questions that test for live data access, metric standardization, self-service for non-technical users, auditable governance, and concrete implementation timelines.

- Agentic analytics (AI that monitors, reasons, and acts without prompting) is the next trajectory.

Introduction

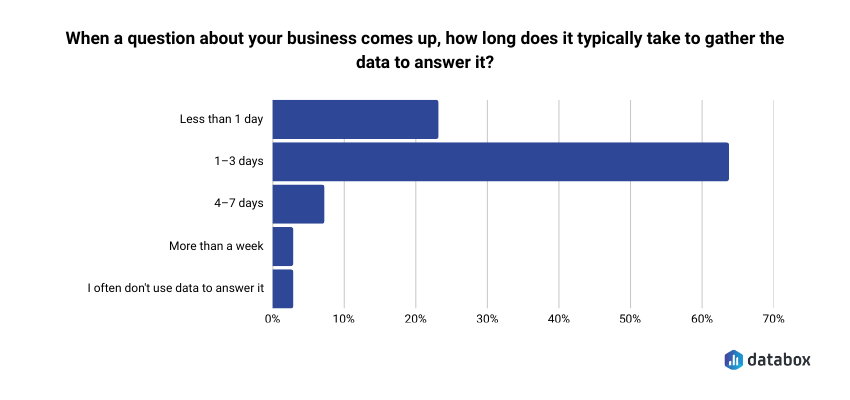

According to Databox's Time to Insight research, 64.29% of teams say it typically takes 1–3 days to gather the data needed to answer a business question.

Every analytics vendor selling “embedded generative AI” in 2026 promises to close that gap. Most of them won’t, not because the technology doesn’t work, but because most products sold under that label are chatbots layered on top of the same legacy BI architecture that produced the 1–3 day gap in the first place. New interface, same bottleneck.

Embedded generative AI is the most overclaimed feature in the analytics category right now. The label has been applied so loosely that executives face a harder problem than choosing the right vendor: working out which vendors are telling the truth about what their AI actually does.

Business analytics solutions with embedded generative AI, when the architecture is real, are designed to do four things at the workflow level:

- answer plain-language questions against live data in seconds,

- surface anomalies before anyone asks,

- generate narrative explanations of metric movements, and

- project what is likely to happen next.

Done right, this architecture collapses the gap between the question a CEO or CFO asks and the answer they receive from days to seconds. Most analytics platforms, however, have responded by adding an AI badge to tools that still require the same human queues, SQL dependencies, and manual interpretation they always did.

This guide is built for the decision in front of you. It explains what “embedded” actually means at the architectural level, why most organizations have not yet extracted real ROI from AI analytics, and the five questions that separate genuinely embedded AI from a dashboard with a chatbot slapped on.

The question isn’t whether your analytics platform uses AI, but whether the AI is actually doing the work or just dressing up the same old dashboard.

What “Embedded Generative AI” Actually Means (and What It Doesn’t)

“Embedded” is the operative word in business analytics solutions with embedded generative AI, and vendors are systematically misusing it. A meaningful architectural difference separates generative AI bolted on as a conversational wrapper (a chatbot sitting in front of the same static dashboard) from AI natively woven into the analytics workflow.

Traditional BI requires the user to know what question to ask, how to structure it in the platform’s query language, and how to interpret the output. The analyst is the intermediary. The executive waits.

AI-native analytics inverts this model: the system surfaces what the executive needs to know, not just what they knew to ask for.

A CFO who requests a margin report from IT and waits 48 hours for a formatted output is using traditional BI with a modern interface. A CFO who types “Why did EBITDA compress in March?” and receives a narrative answer grounded in live financial data within seconds is using genuinely embedded AI. Same data. Fundamentally different architecture.

The distinction matters because vendors selling chatbot wrappers on legacy BI can, and do, market their product as “AI-powered analytics.” An executive who cannot distinguish wrapper from embedded architecture will make the wrong buying decision and discover the gap only after deployment, when the AI still requires an analyst to frame the right query and validate the output.

Why the Timing Is Now: The 2026 Inflection Point

The shift to embedded generative AI in analytics is not an incremental improvement to BI. 2026 represents a category-level inflection driven by three converging forces that were not simultaneously present even 18 months ago: infrastructure maturity, model capability, and market adoption at scale.

Gartner reports the data science and AI platforms segment grew 38.6% in 2024, the fastest growth rate in the analytics market’s history. The embedded analytics market is projected at $90 billion in 2026. And the adoption trajectory is steepening sharply: Gartner projects that 40% of enterprise applications will have task-specific AI agents by the end of 2026, compared with fewer than 5% in 2025. That is at least an 8x expansion in a single year.

The structural shift is best understood as a move from copilot to analyst. The first generation of AI analytics tools were copilots, assisting a skilled operator who still needed to know what to ask, how to frame it, and how to validate the output. The current generation is beginning to function as analysts. They surface what matters, explain why it matters, and recommend what to do next.

A VP of Marketing who used to spend Thursday afternoon building a campaign performance deck now receives an auto-generated summary with variance explanations every Monday morning. The work did not get faster. It was removed from the workflow entirely.

For executives at growth-stage companies, the timing creates both opportunity and risk. The opportunity: AI analytics that genuinely works can compress the decision cycle from days to minutes, giving smaller teams the analytical capacity that used to require a dedicated data organization. The risk: adopting a solution that markets the shift but has not made it architecturally will lock you into the old model with a new interface. You will not discover the gap until the AI gives your CFO a different answer than your VP of Sales for the same question.

Four capabilities constitute the minimum bar for genuine embedded AI analytics: natural language querying against live data, automated anomaly detection, auto-generated narratives, and predictive analytics. These are architectural requirements and not premium features or future roadmap items. The next section evaluates each one and explains how to test whether a vendor is actually delivering it.

Four Capabilities Separate Actual Embedded AI Analytics From a Dashboard With a Chatbot

Every analytics vendor will check the four capability boxes described above on a sales sheet. The executive’s job is to determine whether those capabilities are architecturally real or cosmetically applied. The architecture behind any claimed speed determines whether the decisions are accurate or just fast.

Natural Language Querying: Architecture Determines Whether You Get Answers or Hallucinations

NLQ is the capability executives encounter first and evaluate most superficially. A vendor demo where an executive types a question and receives a chart feels impressive. What happened behind that demo determines whether the answer is trustworthy.

The risk is specific: an LLM asked to compute revenue figures can produce a confident, plausible, wrong answer. Hallucination risk in an analytics-specific context is categorically different from a chatbot getting a trivia question wrong. A hallucinated revenue number can drive a budget reallocation, a board narrative, or a headcount decision based on data that does not exist.

The architectural solution is the separation of concerns. The LLM handles language interpretation and narrative generation. The semantic layer, a standardized definition layer sitting between raw data and AI queries, handles computation against verified metric definitions. Research shows a semantic layer substantially improves NLQ accuracy on complex queries, taking accuracy from 0% to 70%.
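A minimal Python sketch of that separation of concerns, under stated assumptions. `interpret` is a hypothetical stand-in for the LLM: a real system would call a model to turn the question into a structured request, while here a keyword match keeps the example runnable. The metric registry, field names, and sample rows are illustrative, not any vendor's API. The property being illustrated is that the number comes from a deterministic, registered computation, and the language step only formats values it is handed.

```python
from dataclasses import dataclass

# Hypothetical rows standing in for a live connected data source.
ORDERS = [
    {"month": "2026-03", "region": "Northeast", "net_revenue": 120_000.0},
    {"month": "2026-03", "region": "West", "net_revenue": 95_000.0},
    {"month": "2026-02", "region": "Northeast", "net_revenue": 140_000.0},
]

# Semantic layer: each metric name maps to exactly one computation.
METRICS = {
    "net_revenue": lambda rows: sum(r["net_revenue"] for r in rows),
    "order_count": lambda rows: len(rows),
}

@dataclass
class MetricRequest:
    metric: str
    month: str

def interpret(question: str) -> MetricRequest:
    """Hypothetical stand-in for the LLM: language in, structured request out."""
    q = question.lower()
    metric = "net_revenue" if "revenue" in q else "order_count"
    month = "2026-03" if "march" in q else "2026-02"
    return MetricRequest(metric=metric, month=month)

def compute(req: MetricRequest) -> float:
    """Semantic layer: refuses unknown metrics instead of estimating a number."""
    if req.metric not in METRICS:
        raise KeyError(f"Unknown metric: {req.metric}")
    rows = [r for r in ORDERS if r["month"] == req.month]
    return METRICS[req.metric](rows)

def narrate(req: MetricRequest, value: float) -> str:
    """Language step: formats a value it was given; it never computes one."""
    return f"{req.metric.replace('_', ' ')} for {req.month} was {value:,.0f}."

req = interpret("What was revenue in March?")
value = compute(req)
print(narrate(req, value))  # net revenue for 2026-03 was 215,000.
```

If the model misreads a question, the failure mode is a wrong or rejected metric name, which is visible and checkable, rather than a fabricated figure.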

The problem this solves is widespread, not theoretical: 35.55% of mid-size SaaS companies say keeping metric definitions consistent across teams is not well served by their current approach (Databox, How Mid-Size SaaS Companies Think About Business Data).

“We had plenty of data, but aligning on what it meant across teams was the challenge. Siloed systems and inconsistent definitions slowed down every decision. We consolidated our Salesforce instances post-acquisition and standardized pipeline definitions to fix it.”

Without a semantic layer enforcing single definitions, every AI query inherits that inconsistency. “Total revenue” might mean gross revenue to Sales, net revenue to Finance, and recognized revenue to the CFO’s board deck. The AI returns a different number for the same question depending on who asks and how they phrase it.

A COO who asks “What is our CAC by channel this quarter?” gets one answer when a semantic layer enforces a single definition of CAC. Without that layer, the AI may calculate CAC differently than the Finance team’s model, and the discrepancy surfaces in the worst possible venue: a board meeting.
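The CAC example can be made concrete with a short sketch. For illustration only, it assumes the company's agreed definition of CAC is total sales and marketing spend divided by new customers, per channel. The spend figures, channel names, alias table, and the “ad spend only” variant are hypothetical. It shows how an ungoverned calculation diverges from the governed one, and how every phrasing of the question resolves to the same governed number.

```python
# Hypothetical quarterly inputs per channel (currency units).
SPEND = {
    "paid_search": {"ad_spend": 40_000, "other_sales_marketing": 20_000},
    "events": {"ad_spend": 10_000, "other_sales_marketing": 30_000},
}
NEW_CUSTOMERS = {"paid_search": 60, "events": 20}

def cac_governed(channel: str) -> float:
    """The one central definition (assumed here): all S&M spend / new customers."""
    s = SPEND[channel]
    return (s["ad_spend"] + s["other_sales_marketing"]) / NEW_CUSTOMERS[channel]

def cac_ad_hoc(channel: str) -> float:
    """An ungoverned variant a team might compute on its own: ad spend only."""
    return SPEND[channel]["ad_spend"] / NEW_CUSTOMERS[channel]

# Semantic layer: every phrasing resolves to the same governed definition.
ALIASES = {
    "cac": cac_governed,
    "customer acquisition cost": cac_governed,
    "cost per new customer": cac_governed,
}

def answer(phrase: str, channel: str) -> float:
    return ALIASES[phrase.strip().lower()](channel)

for phrase in ("CAC", "Customer Acquisition Cost", "cost per new customer"):
    print(f"{phrase!r} on paid_search -> {answer(phrase, 'paid_search')}")  # 1000.0 each time
print("ad hoc, ad spend only ->", round(cac_ad_hoc("paid_search"), 2))       # 666.67
```

The 1,000 versus 666.67 gap is the kind of discrepancy that otherwise surfaces in the board meeting. Which definition is correct is a business decision. The semantic layer only guarantees that there is exactly one.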

Anomaly Detection: The Difference Between Surveillance and Reaction

Traditional dashboards are passive. An executive must log in, check the right dashboard, notice an anomaly in a chart, and investigate the cause. The entire workflow depends on the executive already suspecting something is wrong and knowing where to look.

Embedded AI anomaly detection inverts this. The system monitors continuously, applies statistical thresholds to every connected data stream, and delivers answers with context, not just “this number changed” but “this number changed by this amount, which is outside normal variance, and here is the likely contributing factor.” The executive receives the insight without going in to find it.
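A minimal sketch of the “outside normal variance” check: a z-score of each new value against a trailing window. The 7-day window, the 3-standard-deviation threshold, and the daily signup series are assumptions chosen for illustration. Production systems typically also model seasonality and trend, which this sketch does not attempt.

```python
from statistics import mean, stdev

def detect_anomalies(series, window=7, threshold=3.0):
    """Flag points whose z-score against the trailing `window` values exceeds `threshold`.

    Returns a list of (index, value, trailing_mean, z_score) tuples."""
    flagged = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            continue  # flat history: z-score undefined, skip this point
        z = (series[i] - mu) / sigma
        if abs(z) > threshold:
            flagged.append((i, series[i], mu, z))
    return flagged

# Hypothetical daily signups; day 10 drops sharply.
signups = [102, 98, 105, 100, 97, 103, 101, 99, 104, 100, 62, 101]

for day, value, mu, z in detect_anomalies(signups):
    print(f"Day {day}: {value} signups vs. trailing mean {mu:.0f} "
          f"(z = {z:.1f}), outside normal variance")
```

One design consequence to be aware of is that the drop stays in the trailing window for the next seven days. During that time it inflates the variance used to judge later points. This is one reason real systems often prefer robust statistics such as the median.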

Auto-Generated Narratives Turn Metrics Into Executive-Ready Answers

A number without context is not an insight. A chart without interpretation requires the same analytical work the executive was trying to avoid. Auto-generated narratives close this gap by translating metric movements into plain language that matches executive consumption patterns.

The output is not “Revenue: $4.2M (down 15%).” It is “Revenue declined 15% vs. forecast, driven primarily by a contraction in Segment X and underperformance in new logo acquisition in the Northeast region. Segment Y held steady, and expansion revenue partially offset the decline.” That narrative is board-ready. It removes hours of manual synthesis from weekly reviews, investor updates, and cross-functional alignment meetings.
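One way to produce a narrative like that is sketched below under simple assumptions. The function compares actual revenue with forecast per segment, ranks the segments that dragged the total down, and fills a sentence template. The segment names and figures are hypothetical and chosen to reproduce the 15% example. A real product may use an LLM to phrase the text. The sketch's point is that every number in the sentence comes from a deterministic calculation.

```python
def revenue_narrative(actual: dict, forecast: dict) -> str:
    """Turn per-segment actual vs. forecast revenue into a short narrative.

    This sketch only elaborates on declines; growth gets a one-line summary."""
    total_a, total_f = sum(actual.values()), sum(forecast.values())
    pct = (total_a - total_f) * 100 / total_f
    # Each segment's contribution to the overall gap, in currency units.
    deltas = {seg: actual[seg] - forecast[seg] for seg in forecast}
    drags = sorted((s for s, d in deltas.items() if d < 0), key=deltas.get)
    lifts = [s for s, d in deltas.items() if d > 0]
    steady = [s for s, d in deltas.items() if d == 0]

    if pct >= 0:
        return f"Revenue grew {pct:.0f}% vs. forecast."
    text = f"Revenue declined {abs(pct):.0f}% vs. forecast"
    if drags:  # name the two largest negative contributors
        text += ", driven primarily by " + " and ".join(drags[:2])
    text += "."
    if steady:
        text += " " + ", ".join(steady) + " held steady."
    if lifts:
        text += " " + " and ".join(lifts) + " partially offset the decline."
    return text

forecast = {"Segment X": 2_000_000, "Northeast new logos": 1_500_000,
            "Segment Y": 1_000_000, "Expansion revenue": 500_000}
actual = {"Segment X": 1_400_000, "Northeast new logos": 1_000_000,
          "Segment Y": 1_000_000, "Expansion revenue": 850_000}
print(revenue_narrative(actual, forecast))
```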

Predictive Analytics Requires Clean Data, Which Is Why Governance Comes First

The shift from backward-looking to forward-looking analytics, from “what happened” to “what is likely to happen next,” is the capability with the highest executive value and the highest data quality dependency. Churn probability scoring, pipeline coverage forecasting, and demand signal detection all require clean, longitudinal data fed consistently into AI models over time.

An executive evaluating predictive capabilities should ask a pointed question: what happens to your prediction accuracy when my data has gaps? The honest answer is that prediction accuracy degrades as data quality degrades. Data quality is not supplementary reading; it is the prerequisite for everything described above.
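The data-gap question can be illustrated with a deliberately simple model. The sketch fits a least-squares linear trend to a monthly series (hypothetical pipeline values in $k). It drops missing months (`None`), reports what share of the history was usable, and declines to project when coverage falls below a cutoff. The linear model and the 75% cutoff are illustrative assumptions, not how any particular product forecasts. The sketch shows one guardrail: a forecast should say how much clean history it rests on.

```python
def linear_forecast(series, min_coverage=0.75):
    """Fit y = a + b*t by ordinary least squares on non-missing points and
    project the next period. Returns (forecast or None, coverage)."""
    points = [(t, y) for t, y in enumerate(series) if y is not None]
    coverage = len(points) / len(series)
    if coverage < min_coverage or len(points) < 2:
        return None, coverage  # not enough clean history to project
    n = len(points)
    mean_t = sum(t for t, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    slope = (sum((t - mean_t) * (y - mean_y) for t, y in points)
             / sum((t - mean_t) ** 2 for t, _ in points))
    intercept = mean_y - slope * mean_t
    return intercept + slope * len(series), coverage

history = {
    "complete": [100, 110, 120, 130, 140, 150],
    "one gap": [100, 110, None, 130, 140, 150],
    "mostly gaps": [100, None, None, 130, None, 150],
}
for name, series in history.items():
    forecast, coverage = linear_forecast(series)
    if forecast is None:
        print(f"{name}: {coverage:.0%} of months usable, no forecast")
    else:
        print(f"{name}: next month ~{forecast:.0f} ({coverage:.0%} of months usable)")
```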

The Rise of Agentic Analytics: What Comes After “Embedded AI”

Embedded generative AI is the current moment. Agentic analytics (AI systems that monitor continuously, reason through trade-offs, and trigger actions without being prompted) is the near-term trajectory. The distinction matters for evaluation: embedded AI answers questions when asked. Agentic AI acts without being asked.

But agentic analytics is not plug-and-play for most organizations in 2026. The data foundation, governance structures, and integration architecture required for AI to act autonomously, not just answer autonomously, are still maturing. Treat agentic capabilities as a 12-to-24-month planning horizon. A vendor describing them as a current production feature for a mid-market company is either ahead of the market or ahead of the truth. Ask for a reference customer.
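To make the monitor, reason, act distinction concrete, here is a minimal sketch of one agentic cycle. Everything in it is hypothetical: the thresholds, the playbook actions, and the low/high risk split are illustrative, not a product API. Reflecting the governance caution above, the agent executes only low-risk actions on its own and routes high-risk ones to a human. In practice a scheduler, not a user question, would call `run_once`.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Action:
    name: str
    risk: str  # "low" actions may run autonomously; "high" ones need a human

@dataclass
class Agent:
    threshold: float  # fractional drop vs. baseline that triggers a response
    log: list = field(default_factory=list)

    def monitor(self, current: float, baseline: float) -> float:
        """Observe: fractional change of a metric against its baseline."""
        return (current - baseline) / baseline

    def reason(self, change: float) -> Optional[Action]:
        """Decide: map the observed change to a (hypothetical) playbook action."""
        if change <= -2 * self.threshold:
            return Action("pause_underperforming_campaigns", risk="high")
        if change <= -self.threshold:
            return Action("notify_channel_owner", risk="low")
        return None

    def act(self, action: Optional[Action]) -> str:
        """Act: execute low-risk actions, route high-risk ones for approval."""
        if action is None:
            outcome = "no action"
        elif action.risk == "low":
            outcome = f"executed {action.name}"
        else:
            outcome = f"awaiting approval: {action.name}"
        self.log.append(outcome)  # every cycle is recorded, including "no action"
        return outcome

    def run_once(self, current: float, baseline: float) -> str:
        """One unprompted monitor -> reason -> act cycle."""
        return self.act(self.reason(self.monitor(current, baseline)))

agent = Agent(threshold=0.10)
for current in (95, 88, 75):  # hypothetical readings against a baseline of 100
    print(current, "->", agent.run_once(current, baseline=100))
```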

What Executives Should Demand From Any Business Analytics Solution With Embedded AI

Most analytics vendors will check every capability box on a sales sheet. The five questions below separate architecturally sound solutions from AI-badged traditional BI. Each question includes what a bad answer sounds like, because bad answers are more revealing than good ones. The vendor conversation is already live for many readers of this article: 52.33% of mid-size SaaS companies say they would consider replacing their current BI/analytics tool (Databox, How Mid-Size SaaS Companies Think About Business Data). The cost of choosing wrong is paid in the year after signature, not the day of.

1. Does the AI query live data, or does it generate plausible-sounding estimates?

A bad answer sounds like: “Our AI uses your historical data to generate insights.”

A good answer sounds like: “Queries run against your live connected data sources with timestamped results you can audit.” The distinction matters because an AI generating estimates from cached or historical data will eventually contradict the live numbers your finance team is reporting. You will not know which version is wrong until someone checks manually.

2. Is there a semantic layer that standardizes metric definitions across the organization?

A bad answer sounds like: “Our AI learns your data over time.”

A good answer sounds like: “Metric definitions are set once, centrally, and every query resolves against those definitions regardless of how the question is phrased or who asks it.” Without this, you have a sophisticated language model operating on ambiguous data. The output is fluent. The accuracy is unreliable.

3. Can non-technical executives get answers without routing through an analyst or data team?

A bad answer sounds like: “Your analysts can use the AI to work faster.”

A good answer sounds like: “Any user with appropriate access can ask a question in plain language and receive an answer independently.” If the AI still requires a technical intermediary to frame, run, or validate queries, you have not removed the analyst bottleneck. You have added an AI layer on top of it.

4. How does the platform handle data governance, access control, and auditability?

A bad answer sounds like: “We take security seriously.”

A good answer names specific certifications, describes role-based access controls, confirms audit logs are available, and documents data residency options. Governance is not a feature checkbox. It is the foundation that determines whether your board and your CFO can trust the numbers the AI produces.

5. What does the implementation path look like, weeks or months?

A bad answer sounds like: “Implementation timelines vary based on your data environment.”

A good answer provides a concrete onboarding timeline with milestones and accountability. If the vendor cannot tell you how long it takes to get from signed contract to executive-ready dashboards, they either do not know or do not want you to know.

The difference between a system that genuinely broadens decision-making access and one that repackages the same analyst bottleneck with an AI badge comes down to architecture, data infrastructure, and governance. Not the sophistication of the language model on top.
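Two of the five questions are concrete enough to sketch: live, timestamped data (question 1) and role-based access with audit logs (question 4). The sketch below is a minimal illustration. The roles, metrics, values, and sync times are hypothetical, and so is the 15-minute freshness window. An in-memory list stands in for what would need to be durable, tamper-evident audit storage. Every query attempt is logged whether or not it is allowed. Every returned answer carries both the query time and the data's last-sync time, so a stale number is identifiable instead of silently presented as current.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical role -> metrics that role may query (question 4).
ROLE_PERMISSIONS = {
    "cfo": {"revenue", "ebitda", "cac"},
    "marketing_vp": {"revenue", "cac"},
}

# Hypothetical live source: metric -> (value, when the source last synced).
SOURCE = {
    "revenue": (4_250_000.0, datetime(2026, 3, 31, 8, 55, tzinfo=timezone.utc)),
    "ebitda": (610_000.0, datetime(2026, 3, 30, 13, 0, tzinfo=timezone.utc)),
    "cac": (1_000.0, datetime(2026, 3, 31, 8, 50, tzinfo=timezone.utc)),
}

AUDIT_LOG = []  # in memory for the sketch; production needs durable, tamper-evident storage

@dataclass(frozen=True)
class QueryResult:
    metric: str
    value: float
    queried_at: datetime      # when the query ran (question 1: timestamped)
    data_synced_at: datetime  # when the underlying data was last refreshed

    def is_fresh(self, max_age: timedelta = timedelta(minutes=15)) -> bool:
        """True if the data was synced within max_age of the query time."""
        return self.queried_at - self.data_synced_at <= max_age

def run_query(user: str, role: str, metric: str, now: datetime) -> Optional[QueryResult]:
    """Check role-based access, log every attempt, return a timestamped result."""
    allowed = metric in ROLE_PERMISSIONS.get(role, set())
    AUDIT_LOG.append({"at": now.isoformat(), "user": user, "role": role,
                      "metric": metric, "allowed": allowed})
    if not allowed:
        return None
    value, synced_at = SOURCE[metric]
    return QueryResult(metric, value, now, synced_at)

now = datetime(2026, 3, 31, 9, 0, tzinfo=timezone.utc)
print(run_query("dana", "marketing_vp", "ebitda", now))  # None: role not permitted
for metric in ("revenue", "ebitda"):
    result = run_query("lee", "cfo", metric, now)
    print(metric, result.value, "fresh" if result.is_fresh() else "stale")
for entry in AUDIT_LOG:
    print(entry)
```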

How Databox AI Approaches Embedded Generative AI

Most analytics platforms claim to be AI-native. Few are architected that way. Measuring Databox AI against the five evaluation criteria above reveals specific architectural choices rather than marketing language.

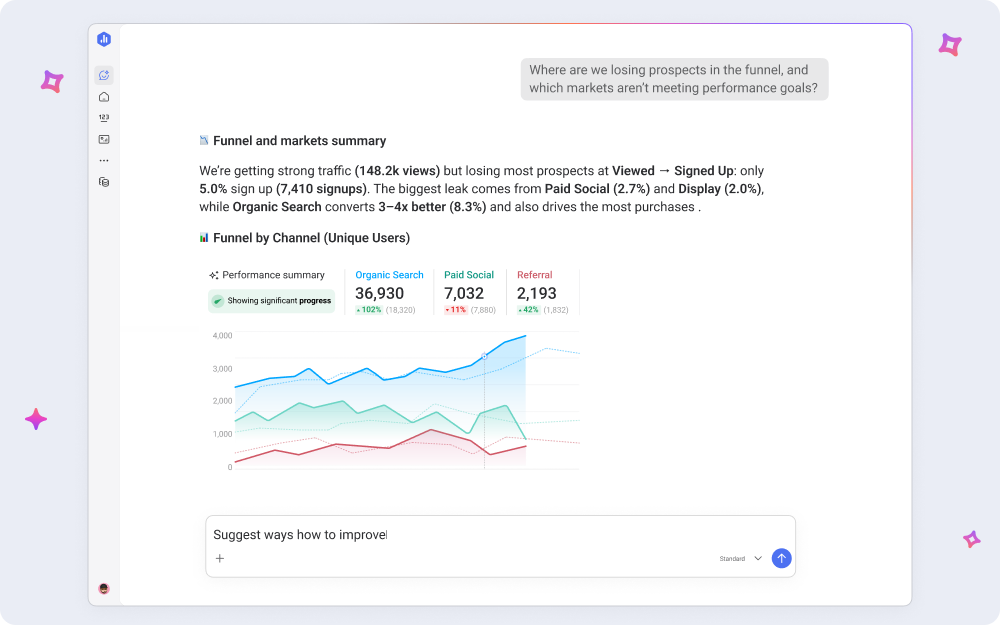

Live data, not estimates. Genie, the AI Analyst inside Databox, answers plain-English questions against live connected data from 130+ native integrations, including HubSpot, Salesforce, and Google Ads. Queries run against real numbers with real timestamps, not cached snapshots or AI-generated approximations.

Semantic layer, not guesswork. Metric definitions are standardized at the platform level through the Metric Library. “What is our CAC?” returns the same answer whether the CEO, the VP of Marketing, or a board member asks it, regardless of how the question is phrased.

Self-service for everyone, not analyst-dependent. Teams ask real business questions in plain conversational language and receive answers with visual context and narrative explanation: no SQL, no pipeline management, no data warehouse expertise required. Genie was built so the person with the question gets the answer directly.

Governance built into the infrastructure. Databox runs on AWS infrastructure with AES-256 encryption at rest, TLS 1.2 or higher in transit, and Amazon VPC isolation. The platform is GDPR-compliant, currently undergoing SOC 2 certification, and operates under security controls aligned with ISO 27001 and the NIST Cybersecurity Framework. Access controls and auditability are architectural choices, not afterthoughts.

Implementation in weeks, not months. Generative AI Performance Summaries are the narrative layer built for executive consumption. They auto-generate plain-language commentary and recommendations alongside your metrics, Goals, and Databoards, and are available as soon as your data sources are connected.

Conclusion

The category is loud. Every vendor will tell you their product is AI-native, their AI is embedded rather than bolted on, and their roadmap includes agentic capabilities by next quarter. The five questions in this guide are the test that cuts through the marketing layer. Live data or estimates. Semantic layer or guesswork. Self-service or analyst-dependent. Auditable governance or “we take security seriously.” A concrete timeline or “it depends.”

A vendor that passes all five is doing the architectural work the marketing claims. A vendor that flinches on any of them is selling a dashboard with a chatbot in front of it.

You now have the framework. The next step is testing it.

Frequently Asked Questions

What is embedded generative AI in business analytics?

Embedded generative AI in business analytics refers to AI capabilities natively integrated into the analytics workflow, not added as a separate chatbot or overlay. The four core capabilities are natural language querying, automated anomaly detection, AI-generated narrative summaries, and predictive analytics. “Embedded” means the AI operates as part of the core product architecture, querying live data against standardized metric definitions rather than generating estimates from cached information.

How is embedded AI analytics different from a BI tool with a chatbot?

A BI tool with a chatbot places a conversational interface on top of the same legacy architecture, leaving manual data refreshes, analyst-dependent query validation, and unstandardized metric definitions intact. Embedded AI analytics rewires the architecture itself: the AI queries live data, a semantic layer enforces consistent metric definitions, anomalies surface automatically, and narratives are generated without human intermediaries. The chatbot model changes the interface. Embedded AI changes the workflow.

What is a semantic layer and why does it matter for AI analytics accuracy?

A semantic layer is a standardized definition layer sitting between raw data sources and AI queries, making terms like “revenue,” “CAC,” or “qualified lead” resolve to a single, consistent calculation regardless of who asks or how the question is phrased. Without one, AI analytics can return different answers for the same question asked by different users. Research shows semantic layers can raise NLQ accuracy on complex queries from 0% to 70%, making them the single most important architectural prerequisite for reliable AI analytics.

What are the biggest risks of using generative AI in business analytics?

The primary risk is hallucination: LLMs generating confident, plausible, wrong answers when asked to compute metrics directly. Architectures that separate language generation (handled by the LLM) from data computation (handled by a semantic layer querying verified sources) mitigate this risk substantially. Secondary risks include inconsistent metric definitions across teams, dirty or siloed source data degrading AI accuracy, and governance gaps that make AI-generated outputs unauditable for board or compliance purposes.

How do I know if my analytics platform truly has embedded AI or just AI features?

Ask five questions: Does the AI query live data or generate estimates? Is there a semantic layer standardizing metric definitions? Can non-technical executives get answers independently? How does the platform handle governance and auditability? What is the concrete implementation timeline? If the vendor answers with generalities rather than specifics on any of these, the AI is more likely a feature badge than an architectural capability.