When teams can’t get trustworthy answers within the decision window, being “data-driven” turns into a queue problem.

TL;DR

- Decision-making slows down when answers travel through a business analyst or RevOps ticket queue — and by the time the data arrives, the decision window has already closed.

- The real challenge in data-informed decision-making is delivering answers quickly while keeping the numbers trustworthy.

- “Self-service analytics” stalled because the tools still required analyst thinking to operate. AI is what finally makes the promise real.

Introduction: the moment the analyst bottleneck becomes visible

The executive team begins the Monday operating review and sees gross margin down 3.2 points week-over-week. They look at the dashboard, then at the RevOps lead, and ask out loud: “Is this real – and if it is, what’s happening and why?”

The room does what rooms always do when the answer isn’t available: people fill the gap with stories. Someone mentions a discount. Someone mentions a fulfillment issue. Someone mentions “seasonality.”

And then comes the part everyone involved in business performance reporting recognizes. A request gets logged. The analyst team is already buried. The earliest ETA is “later this week.” The decision whether to freeze spend, change pricing, or pause a campaign gets made without the answer. Again.

The answer exists. It’s somewhere in the data.

But when the path from question to metric to explanation runs through tickets, backlogs, scattered data, and slightly-misaligned definitions, the analyst bottleneck becomes the ceiling on how fast the company can make decisions.

It’s not just a speed challenge, either. The deeper challenge is ensuring answers arrive quickly and remain defensible. If you can get an answer in seconds but can’t defend the math, you haven’t eliminated the bottleneck; you’ve just postponed it until the next exec meeting.

What’s the real cost of the analyst bottleneck?

The obvious cost is analyst time. But the bigger cost is organizational and decision lag.

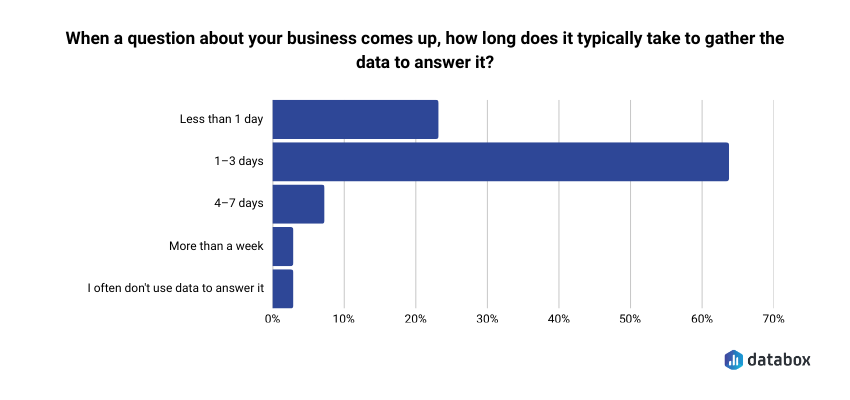

A decision window opens, and the company can’t get to a defensible answer before that window closes. In our recent survey, Time to Insight, over 60% of respondents said it takes one to three days or more to answer a typical business question. That is long enough that, in most weekly operating reviews, the decision window has already closed before the answer arrives.

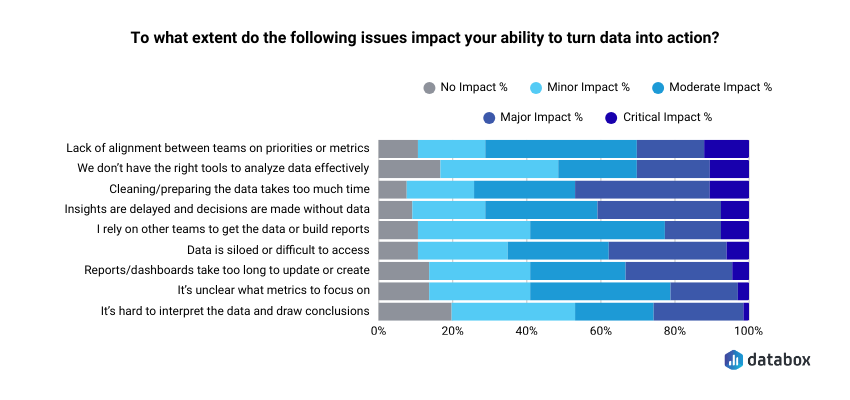

This suggests analysts are overloaded. A lot of that overload is mechanical:

- gathering data

- cleaning and prepping it

- rebuilding recurring reports

- answering the same “what changed?” questions in different meetings

What happens during the delay from data to decision?

By Tuesday, a VP of Marketing is in her pipeline review with MQL-to-SQL conversion down from 34% to 26% and asks: “Which campaigns are creating qualified pipeline, not just form fills?” More digging, more data… another ticket opened.

By Wednesday, a CEO opens the board deck draft after seeing logo churn spike and asks: “Which segment churned, and what’s the common pattern?”

The quest for data-driven answers is never-ending, but with the analytical talent stuck doing mechanical work, leadership still ends up making calls without the numbers.

Why “self-service analytics” is finally real with AI

Self-service analytics promised that leaders like the COO, VP of Marketing, and Head of Sales could answer routine questions without waiting. But in practice, it still meant “you can see charts,” not “you can get explanations you can run the business on.”

Our recent research, Time to Insight, found that roughly 7 in 10 respondents say issues like delayed insights, time spent preparing data, and unclear metrics meaningfully hinder their ability to turn data into action.

The problem was that the tools still required analyst thinking to operate. You still needed to know which question to ask precisely, how to structure it like a query, how to interpret the output responsibly, and how to build or modify the visualization to get there.

That’s self-service with prerequisites (or, as we like to call it, “BI with baggage”).

So the promise stalled. Until AI appeared.

What changed with AI (and why you shouldn’t always trust LLMs with your data)

AI changes self-service analytics in two ways: the interface and the operating model.

The change in interface, or how you interact with the data, is fairly familiar, because it’s how all of us have been interacting with LLMs and AI tools already. Instead of hunting through a dashboard hierarchy, a COO can ask in plain, conversational language:

- “Why did gross margin drop last week?”

- “Which product line drove the change?”

- “Was it discounting, costs, or mix?”

And get a clear explanation back.

But there’s a catch most AI analytics tools don’t advertise, and it has to do with the operating model.

“Here is a dirty secret about most AI data tools: the LLM is doing the calculations. It reads your numbers, tries to compute averages, and hallucinates the results.”

That matters because a language model that’s doing your math is essentially a confident guesser. It can produce a number that looks right, reads well, and is wrong — and you won’t know until someone challenges it in a forecast call or board meeting.

Trustworthy AI analytics requires four things to work together:

- The AI takes your question in plain language and explains the answer in plain language.

- A separate computation engine — not the AI — runs the actual calculation against your real data.

- Your key metrics have a single agreed definition, so when a VP Marketing asks for CAC and a CFO asks for CAC, the system isn’t picking between three versions.

- And every answer can be traced back to its source: which data, which time window, which formula — so you can defend it in the room where it matters.

Without all four, the analyst bottleneck remains (it’s just hidden behind numbers nobody can stand behind).
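To make those four pieces concrete, here is a minimal Python sketch of the separation. It is an illustration under stated assumptions, not a description of how any particular product is built: the rows, the `orders_daily` source name, the `REGISTRY` structure, and the `run_metric` and `explain` helpers are all hypothetical. In a real system, the language model would only translate the question into a metric name and time window, then phrase the result; the number itself comes from ordinary code.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Dict, List

# Hypothetical rows standing in for a real source table (invented for illustration).
ROWS: List[dict] = [
    {"day": date(2024, 1, 1), "revenue": 1000.0, "cogs": 600.0},
    {"day": date(2024, 1, 2), "revenue": 1200.0, "cogs": 780.0},
    {"day": date(2024, 1, 8), "revenue": 1100.0, "cogs": 750.0},
]


def gross_margin_pct(rows: List[dict]) -> float:
    """Deterministic calculation: no language model touches the arithmetic."""
    revenue = sum(r["revenue"] for r in rows)
    cogs = sum(r["cogs"] for r in rows)
    return (revenue - cogs) / revenue * 100


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    formula: str  # human-readable formula, returned with every answer
    compute: Callable[[List[dict]], float]


@dataclass(frozen=True)
class Answer:
    metric: str
    value: float
    formula: str
    source: str
    start: date
    end: date
    row_count: int


# One agreed definition per metric: everyone who asks gets the same formula.
REGISTRY: Dict[str, MetricDefinition] = {
    "gross_margin_pct": MetricDefinition(
        name="gross_margin_pct",
        formula="(sum(revenue) - sum(cogs)) / sum(revenue) * 100",
        compute=gross_margin_pct,
    ),
}


def run_metric(metric: str, start: date, end: date,
               rows: List[dict] = ROWS,
               source: str = "orders_daily (hypothetical)") -> Answer:
    """Computation engine: looks up the agreed definition and runs it on real rows."""
    definition = REGISTRY[metric]  # raises KeyError if no agreed definition exists
    window = [r for r in rows if start <= r["day"] <= end]
    if not window:
        raise ValueError("no rows in the requested time window")
    return Answer(metric, definition.compute(window), definition.formula,
                  source, start, end, len(window))


def explain(answer: Answer) -> str:
    """Stand-in for the plain-language layer: it phrases the result, never computes it."""
    return (f"{answer.metric} was {answer.value:.1f} from {answer.start} to {answer.end} "
            f"({answer.row_count} rows from {answer.source}; formula: {answer.formula}).")


answer = run_metric("gross_margin_pct", date(2024, 1, 1), date(2024, 1, 7))
print(explain(answer))
```

The design choice worth noticing is that every `Answer` carries its own source, time window, and formula, so the explanation can always be checked against the calculation that produced it.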

This type of conversational, reliable AI analysis is exactly what we’re building at Databox.

With Genie, Databox’s AI analyst, anyone on the team can ask plain-language questions about their data and get answers instantly, without jumping between dashboards or waiting for someone who “knows the numbers.” Genie works from the standardized metrics already defined in Databox, so every answer is grounded in your actual data instead of AI guesswork.

What does “the end of the bottleneck” actually unlock?

Eliminating the analyst bottleneck doesn’t mean eliminating analysts. It means changing the economics of access.

“The analyst role, as it exists today, will largely evolve… over the next few years… The work that defines the role today is increasingly mechanical; the role will shift from producing outputs to enabling systems.”

What does the end of the analyst bottleneck look like in real life?

- A smaller number of analysts stops being the throughput limit for the company’s questions.

- Teams get answers inside the decision window.

- Analysts spend less time rebuilding the same weekly report and more time hardening metrics, improving data quality, and shaping how decisions get made.

- An endless stream of recurring decisions (budget shifts, staffing moves, pipeline calls, churn interventions) is now led by judgment-grade answers.

In summary: the company can close the loop from “What changed?” to “What do we do next?” without a week of waiting.

Examples: Do you have an analyst bottleneck?

These are the types of questions that show up in real meetings: the ones that trigger data-digging and analyst tickets when the operating model can’t answer them.

CEO

- “Why did churn spike in the last two weeks?”

- “What’s driving NRR change? Expansion, contraction, or logo churn?”

- “Which segment has the highest LTV, and what assumption is that based on?”

- “What’s the forecast risk if the top 10 deals slip?”

- “Are we seeing product-market fit tighten or loosen this quarter?”

VP Marketing

- “Which campaigns are driving qualified pipeline, not just clicks?”

- “Did CAC increase because of CPC, conversion rate, or mix?”

- “Which channel has the highest payback period by cohort?”

- “Where did MQL-to-SQL conversion break?”

- “Which landing pages lost conversion?”

Head of Sales / Head of Revenue

- “Which reps convert trials to paid at the highest rate?”

- “Where are deals stalling by stage, and what’s the pattern by segment?”

- “Is pipeline coverage real, or inflated by low-probability deals?”

- “Did win rate drop because of deal quality or cycle length?”

- “Which accounts expanded last quarter and what did they have in common?”

If your current stack can’t answer these without a human intermediary, you have a decision-latency problem to resolve.

The analyst bottleneck disappears when answers arrive quickly and remain trustworthy enough to act on

The real change is the operating model of how answers are produced and trusted.

Analysts stop being the interface between the business and its own performance. They become the people who make the system trustworthy: defining metrics, maintaining data quality, and ensuring every answer can be explained.

Teams get answers they can trust, delivered in real time, so decisions can happen when they matter, not weeks later.

If you want to see what this looks like in practice, try Genie, our AI analyst. It helps teams that have always had the data, but not always the time or expertise to interrogate it.

Note: This article is based on a Substack article published by Davorin Gabrovec.

Frequently Asked Questions

What is the analyst bottleneck?

The analyst bottleneck happens when business teams rely on a small number of analysts to answer data questions, creating delays that slow decision-making.

Why do self-service analytics often fail?

Many self-service analytics tools still require technical knowledge to query data, interpret results, and build visualizations.

Can AI replace data analysts?

AI changes the analyst’s role. Instead of producing reports, analysts increasingly focus on defining metrics, improving data quality, and ensuring trustworthy analysis.

How does Databox Genie work?

Genie lets teams ask questions about their existing data in plain language. It works from the metrics already defined in Databox, so answers are grounded in your actual data rather than AI guesswork.