
Automated reporting saves your team’s time. AI analytics saves your client relationships — and wins you new ones.

Automated reporting for clients means your agency pulls performance data from every agreed source through APIs into one system, applies consistent metric definitions and formatting, and delivers the same client-ready view on a schedule — without anyone copying and pasting.

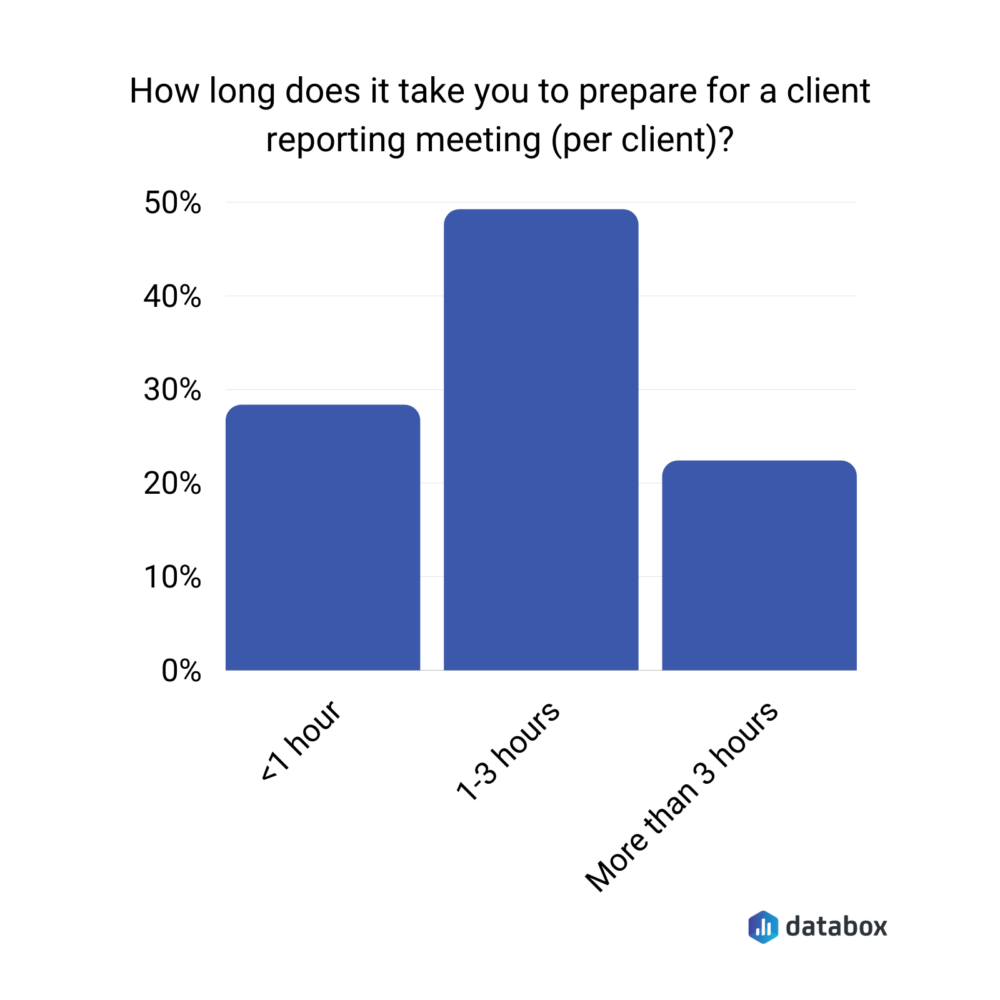

According to a Databox survey, 49% of agency teams spend 1–3 hours preparing for a single client reporting meeting. Automation solves that. But it does not solve the client problem.

Automation removes the compilation labor. AI analytics removes the interpretation labor — and interpretation is what clients actually pay for. The agencies pulling ahead in 2026 are the ones using AI to turn their client dashboards into answers, and using those answers to win new clients before the contract is even signed.

TL;DR

- Automated reporting pulls client data from multiple sources into one system and delivers it on a schedule without manual work. According to a Databox survey, 49% of agency teams spend 1–3 hours preparing for a single client meeting — automation removes that labor.

- Automation answers “what happened.” AI analytics answers “what changed, why, and what to do next” — which is the question clients actually ask. The interpretation layer is what differentiates agencies in 2026.

- Genie, Databox’s AI analyst, lets teams query client data in plain language, surface anomalies automatically, and generate narrative summaries grounded in accurate metrics.

- The six best practices for AI-powered client reporting: (1) centralize data before automating, (2) replace static reports with proactive alerts, (3) structure every report around one business question, (4) use AI to scale account capacity without adding headcount, (5) demonstrate AI reporting live in pitches, (6) measure ROI in two buckets — capacity recovered and revenue protected.

What Automated Reporting for Clients Actually Means in 2026

A reporting workflow qualifies as automated when an account manager can open a client dashboard on Monday morning and see the same spend, leads, revenue, and CAC figures that will appear in the month-end recap. No refresh required. No waiting.

The efficiency case is straightforward. According to a Databox survey on client reporting meetings, 49% of agency teams spend 1–3 hours preparing for a single client reporting meeting, before a single insight has been delivered. Multiply that across 15 accounts and reporting mechanics become a part-time job. That is a fully solvable problem.

But solving the time problem does not solve the client problem. Automation removes the compilation labor. It does not remove the interpretation labor — and interpretation is what clients are actually paying for.

“Our client reports usually take around a few hours for each team member involved in the account to carry out, extracting that all-important information to pop into the reports.”

Why Automation Alone Is No Longer Enough

Automated reporting solved a 2022 problem: producing a consistent deck without burning staff time. Agencies that stop there are still walking into the same client conversation every month, because the report answers ‘what happened’ while the client asks ‘what should we do.’

A client does not keep an agency because the numbers arrived on time, but because the agency spotted a problem early, explained the cause in plain language, and acted before the quarter closed.

“There are loads of backend details you can spare your clients to avoid an unnecessary amount of back and forth. To avoid this, synthesize the most pertinent information for your client and keep them on a need-to-know basis.”

The competitive dynamic has shifted. When every agency can ship a dashboard on the same cadence, speed of delivery stops being a differentiator. What differentiates now is the interpretation layer — the piece that turns a chart into a recommendation the client can defend to their own finance team.

The new gap is not manual versus automated. It is the difference between delivering a dashboard and delivering an answer. Agencies that close that gap are the ones clients call strategic partners. The ones that do not are the ones competing on price.

“It’s critical to not report ‘data for the sake of data.’ Every piece of data reported needs to have a clear reason for being reported, and should come with some sort of insight tied to commercial results.”

How AI Analytics Changes What Your Reporting Delivers

AI analytics in an agency context means software that helps you interpret performance signals across sources, surface exceptions that matter, and translate changes into plain-English explanations — without a human rebuilding the logic every month.

Rule-based automation triggers on rules you already know. AI assists when you do not know what to look for yet.

Consider what changes in a client review when the first slide stops being a channel performance table and starts being an answer:

“CAC dropped 18% month over month because branded search conversion rate rose after the landing page change, while prospecting spend stayed flat. Recommendation: hold Search budget steady, shift 10% from Prospecting to Retargeting for two weeks, and watch demo-to-close rate.”

That is a different conversation. The client is not asking what the numbers mean. They are deciding what to do next — which is the conversation where agencies justify their retainers.

This is where Genie, Databox’s AI analyst, fits. Genie lets your team ask questions in plain language about client performance and get answers grounded in your standardized metrics inside Databox. It surfaces anomalies automatically, generates narrative summaries you can use in an email update or a monthly review doc, and flags performance changes before your client notices them.

One accuracy point that matters in client reporting: the AI should never do your math. Clients do not forgive confident wrong numbers. Genie explains results while Databox’s analytics engine runs the calculations, so an account manager can quote CAC, ROAS, and conversion rate without crossing their fingers.

The sections that follow are the six practices that make this shift reliable and scalable — from the data foundation through to how the reporting system pays for itself.

Best Practice 1 — Centralize Your Data Before You Automate Anything

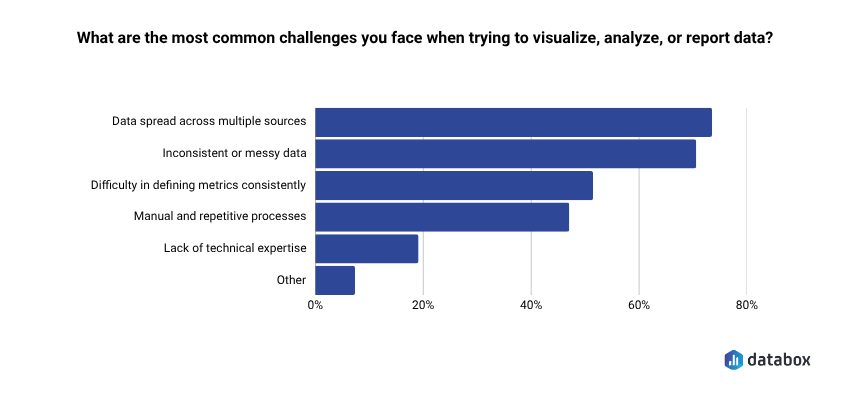

Most agencies are not starting from a clean data infrastructure. According to the Databox Time to Insight survey, 73% of teams say data spread across multiple sources is their top reporting challenge, and 72% cite inconsistent or messy data as a regular obstacle.

The starting point for most small agencies is Google Slides, a shared spreadsheet, and a folder of platform screenshots — not a unified data layer.

That is not a problem. It is just the actual starting line.

Centralization is the prerequisite for everything that follows, not because it makes your dashboards look better, but because you, your client, and the AI all need consistent inputs to get trustworthy outputs. Genie pulls from a unified data layer with agreed metric definitions, so its anomaly detection and recommendations are defensible in a client meeting. When an AI layer pulls from silos with conflicting definitions, it produces noise.

Clients lose trust when two slides in the same deck disagree, for example because one source used platform-reported conversions and another used CRM-qualified leads. That credibility hit is preventable.

Start with decision metrics, not every metric

Pick 8 to 12 metrics that drive client decisions: spend, revenue, ROAS, CAC, conversion rate, lead-to-MQL rate, MQL-to-SQL rate, pipeline, and churn for subscription clients. Lock definitions before building dashboards. Everything else can live in an appendix.

Build a client-level metric dictionary

A metric dictionary becomes the contract for reporting. When a client asks why Shopify revenue does not match GA4, the answer points to a documented attribution choice — not a scramble. This also makes onboarding faster: paste the dictionary into the kickoff doc and the client starts the relationship with aligned expectations.
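As a rough illustration of what one dictionary entry might hold, here is a minimal Python sketch. The `MetricDefinition` class, its field names, and the sample entries are all hypothetical and are not a Databox format; the real content is whatever the client signed off on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """One entry in a client's metric dictionary (all fields are illustrative)."""
    name: str         # label shown on dashboards and in reports
    formula: str      # plain-language formula the client agreed to
    source: str       # system of record for this metric
    attribution: str  # documented attribution choice


# Hypothetical dictionary for an ecommerce client.
METRIC_DICTIONARY = {
    "revenue": MetricDefinition(
        name="Revenue",
        formula="Sum of net order value",
        source="Shopify",
        attribution="Order date, all channels",
    ),
    "blended_cac": MetricDefinition(
        name="Blended CAC",
        formula="Total ad spend / new customers",
        source="Ad platforms + Shopify",
        attribution="All paid channels, first order only",
    ),
}


def explain(metric_key: str) -> str:
    """Return the documented definition to quote when numbers are questioned."""
    m = METRIC_DICTIONARY[metric_key]
    return f"{m.name}: {m.formula} (source: {m.source}; attribution: {m.attribution})"


print(explain("revenue"))
```

Keeping the definitions in one structure means the kickoff doc, the dashboard labels, and the answer to a client's "why don't these match?" question can all draw on the same wording.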

Centralize by client segment, not by tool

An agency supporting ecommerce clients and B2B lead gen clients will not standardize on the same metrics. Build a ‘commerce pack’ and a ‘lead gen pack.’ Apply templates by segment. This is faster to maintain and easier to explain in a pitch.

Best Practice 2 — Replace Static Reports with Proactive Intelligence

Static monthly reporting trains clients to judge you on last month’s outcome. Proactive intelligence trains clients to judge you on how early you spot issues and how clearly you explain trade-offs.

A client relationship turns fragile when the first time a client hears bad news is the scheduled reporting call. You cannot relationship-manage your way out of a surprise 30% lead drop when the client noticed it first in their own CRM. The reactive loop — deliver the report, schedule a meeting, explain what already happened — is the churn trigger most agencies never connect to reporting behavior.

“In the past 12 months, the main reason clients have hired us or switched from another agency has been the desire for better alignment with their growth goals and a stronger ROI. Many clients felt their previous agencies weren’t providing proactive strategies or clear reporting on performance metrics. They sought an agency that could offer a tailored approach to meet their specific objectives and communicate results transparently, which we prioritize.”

Proactive intelligence changes the dynamic in two concrete ways.

Alerts tied to pacing, not vanity metrics

Alert on budget pacing, CPA drift, and conversion-rate drops — signals that constrain what you can do before month-end. Not impressions. Not reach. Things that force a decision this week.
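One way to picture these three signals is a small check that runs on a schedule. The Python sketch below is an assumption-laden illustration, not how Databox or Genie implements alerts: the function name, the straight-line pacing plan, and the 10% tolerance are all hypothetical choices.

```python
def pacing_alerts(spend_to_date, monthly_budget, day_of_month, days_in_month,
                  cpa_now, cpa_target, cvr_now, cvr_baseline, tolerance=0.10):
    """Return plain-language alerts for pacing, CPA drift, and conversion-rate drops.

    All thresholds and inputs are illustrative assumptions.
    """
    alerts = []

    # Budget pacing: compare actual spend with a straight-line plan for the month.
    planned_spend = monthly_budget * day_of_month / days_in_month
    pacing = spend_to_date / planned_spend - 1
    if abs(pacing) > tolerance:
        alerts.append(f"Budget pacing is {pacing:+.0%} versus plan")

    # CPA drift: only flag when CPA runs above target.
    cpa_drift = cpa_now / cpa_target - 1
    if cpa_drift > tolerance:
        alerts.append(f"CPA is {cpa_drift:+.0%} versus target")

    # Conversion-rate drop: only flag declines against the baseline.
    cvr_change = cvr_now / cvr_baseline - 1
    if cvr_change < -tolerance:
        alerts.append(f"Conversion rate is {cvr_change:+.0%} versus baseline")

    return alerts


# Hypothetical mid-month snapshot for one client.
alerts = pacing_alerts(
    spend_to_date=5600, monthly_budget=10000, day_of_month=15, days_in_month=30,
    cpa_now=52, cpa_target=50, cvr_now=0.020, cvr_baseline=0.025,
)
for alert in alerts:
    print(alert)
```

In this made-up snapshot, spend is 12% ahead of plan and conversion rate is down 20%, so both are flagged, while a CPA 4% above target stays below the tolerance and does not trigger anything.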

Plain-English explanations that land in Slack or email

A client does not need another dashboard login. They need a message that says: ‘Meta spend paced 12% ahead of plan this week while Shopify revenue stayed flat, so blended ROAS will miss target unless we throttle Prospecting by Friday.’ Genie supports this shift directly — your team can ask Genie what changed since last week, get an explanation in client language, and send it as a proactive note between reporting cycles, not only at them.

The agencies that build this habit stop being reporters and start being advisors. That is a different retainer conversation.

Best Practice 3 — Make Every Report Answer a Business Question

Clients open a report to reduce uncertainty. A report that opens with a wall of channel metrics forces the client to do analysis work they did not hire you for. That friction is invisible to the agency and obvious to the client.

A question-led structure keeps everyone honest, because the agency can only include metrics that answer the question. For most client segments, the standing question is simple:

- Ecommerce: Are we on track to hit this month’s revenue target at an acceptable blended CAC?

- Lead gen: Are we on track to hit qualified pipeline target, and which channel is driving the change?

Use a ‘one question, one answer, one action’ front page

Open with a single answer: ‘You are on pace to hit revenue target, but blended CAC rose because retargeting frequency increased while new customer conversion rate fell.’ The action follows immediately. Channel tables belong in an appendix the client can ignore unless a specific channel is causing the answer.

Use AI to keep the narrative consistent across clients

An account manager handling ten or more clients cannot handwrite tight narratives for every account without quality drift. Genie can draft the first pass of the narrative summary so a human reviews tone, risk, and next steps — rather than writing from scratch at 11pm on a Wednesday.

This structure is also the most demonstrable thing you can show in a pitch. Most agencies promise superior service. This lets you show a live example of how you communicate. That is a different kind of credibility.

Best Practice 4 — Use AI to Scale Capacity Without Adding Headcount

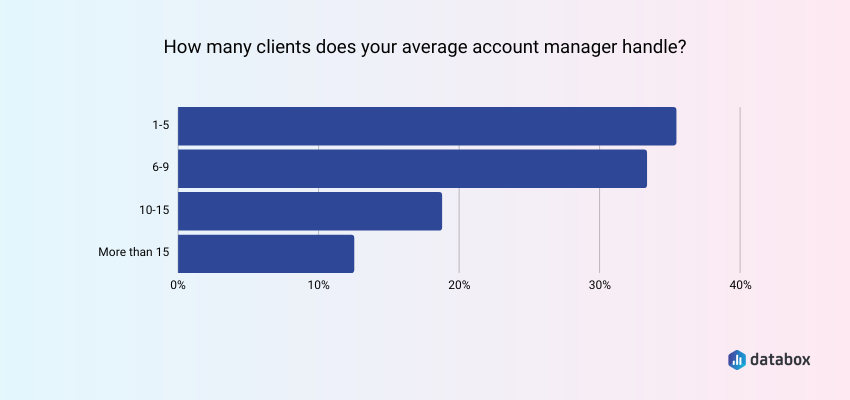

According to Databox research on agency account management, nearly 70% of agencies report their account managers currently handle up to 10 accounts.

AI changes that ceiling by handling the work that makes high client loads unsustainable: recurring narrative generation, anomaly monitoring, and first-pass Q&A. Automation removed the data-pulling work. AI removes the thinking work that scales linearly with client count — but only when the AI layer handles first-pass interpretation for recurring questions, so humans spend their time on exceptions and decisions.

For a founder or account manager running a lean book of business, that shift is the difference between being perpetually reactive and occasionally being strategic.

The capacity math is concrete. If an account manager currently handles 8 clients, squarely within the typical range most agencies report, and AI-assisted workflows allow them to push toward the 12–15 range that more experienced, better-tooled AMs sustain, that is 4 to 7 additional accounts. At an average retainer of $3,000 per month, that works out to $12,000–$21,000 per month in additional revenue on the same salary line. The hours recovered from automated reporting and AI-assisted narratives are the fuel for that expansion, but only if those hours go into client strategy rather than getting quietly absorbed.
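The arithmetic behind that range can be sketched in a few lines of Python. The $12,000–$21,000 figure is consistent with an average retainer of $3,000 per month, the number used in this article's sales example; treat that retainer, and the function name, as assumptions to replace with your own.

```python
def added_monthly_revenue(current_accounts, target_accounts, avg_monthly_retainer):
    """Extra monthly revenue from the accounts added to one manager's book."""
    return (target_accounts - current_accounts) * avg_monthly_retainer


AVG_RETAINER = 3_000  # assumption: average monthly retainer in dollars

low = added_monthly_revenue(8, 12, AVG_RETAINER)   # 4 additional accounts
high = added_monthly_revenue(8, 15, AVG_RETAINER)  # 7 additional accounts
print(f"${low:,} to ${high:,} per month")
```

Swapping in your own account counts and retainer shows quickly whether the expansion is worth planning for.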

The accuracy requirement matters here at scale. A stretched team cannot manually sanity-check every number in every narrative. Databox’s architecture addresses this directly: Genie explains results while the analytics engine runs the calculations. At scale, that separation is not a nice-to-have — it is what keeps you from sending a client a confident wrong number at 6pm on a Friday.

The role shift for senior team members is also worth naming. When AI handles recurring explanation work, experienced account managers move from producing reports to owning metric definitions, investigating anomalies, and designing the client decision cadences that differentiate the agency. That is a better use of their skills and a more defensible value proposition to clients.

Best Practice 5 — Turn Your Reporting Capability Into a Sales Asset

Most agencies pitch reporting as a hygiene factor. ‘Monthly dashboards, weekly updates, custom reporting on request.’ Every competitor says the same thing, so prospects treat it as table stakes and stop listening.

The reporting system you have built — centralized data, AI-generated narratives, proactive alerts — is not a back-office efficiency gain. It is demonstrable proof of differentiation, and you can show it in a pitch meeting before the contract is signed.

Show the system live, not in a slide

Ask the prospect for read-only access, exports, or sample data before the pitch. Build a sample workspace with their key metrics. Then in the meeting, say: ‘Ask us any question you would ask after month one.’ Answer it live, using the same AI-assisted workflow the client will get post-close.

Genie supports this directly. Your team can use it to answer prospect questions in plain language without disappearing for two days, produce a narrative summary that demonstrates how you communicate between meetings, and surface anomalies in the prospect’s own data that prove you will catch issues early. A prospect who sees their numbers, analyzed in your system, explained in plain English, trusts the agency’s operating model — not just its case studies.

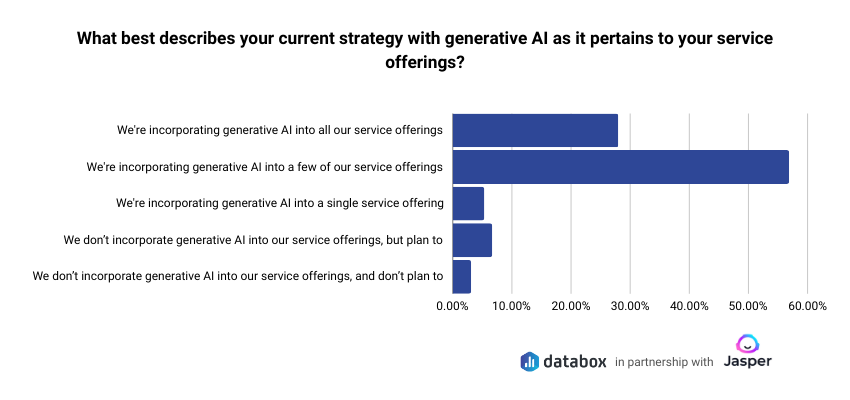

According to Databox’s research on the role of AI in marketing, 89% of small businesses in marketing and advertising are already actively implementing AI. The agencies that can demonstrate a working AI analytics workflow are not selling a future capability. They are showing a present-tense operating advantage that the prospect’s current agency cannot match.

Document the pitch-to-close conversion lift

Track whether prospects who see a live AI demo convert at a higher rate than those who see a standard credentials deck. Even rough data here — two or three additional closes per quarter — becomes part of the ROI case in the next section.
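A simple way to track this is to compare close rates for the two pitch formats. The sketch below is illustrative only: the function names and the pitch counts are hypothetical, and no real results are implied.

```python
def close_rate(closes, pitches):
    """Share of pitches that turned into signed clients."""
    if pitches == 0:
        raise ValueError("no pitches recorded for this group")
    return closes / pitches


def conversion_lift(demo_closes, demo_pitches, deck_closes, deck_pitches):
    """Close rate with a live demo minus close rate with a credentials deck."""
    return close_rate(demo_closes, demo_pitches) - close_rate(deck_closes, deck_pitches)


# Hypothetical quarter: 12 pitches with a live demo, 12 with a standard deck.
lift = conversion_lift(demo_closes=5, demo_pitches=12, deck_closes=3, deck_pitches=12)
print(f"Close-rate lift: {lift:.1%}")
```

With counts this small, the result is directional rather than statistically solid, which matches the "even rough data" framing above.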

Best Practice 6 — Measure the ROI of Your Reporting Infrastructure

Reporting tools feel expensive when agencies treat reporting as overhead. They feel like an investment when agencies connect them to the numbers that actually govern the business: margin, retention, and new business close rate.

A solid internal business case has two buckets.

Recovered capacity

Calculate current reporting hours per account manager per month. Model hours after automation and AI-assisted narratives. For a team member spending 20 hours a month on reporting mechanics across their client book, even a 50% reduction returns 10 hours — enough for two additional proactive client touchpoints per week, or meaningful time on new business.

The key decision: reinvest part of the savings into proactive client work rather than absorbing it silently. Agencies that do this see retention effects. Agencies that just quietly take the time back see efficiency gains but miss the relationship upside.

Growth impact: retention and sales

Proactive alert workflows reduce the ‘surprise’ moments that trigger churn conversations. A client who hears about a problem from you before they notice it themselves is in a fundamentally different emotional state than one who brings it to you. That difference does not always show up in a quarterly NPS score, but it shows up in renewal conversations.

On the sales side, suppose a live AI demo increases your pitch-to-close rate by even 10% and your average retainer is $3,000 per month. If that lift yields one additional close per quarter, each of those closes is worth $36,000 in annual recurring revenue. Against a monthly tooling cost of a few hundred dollars, the payback math is usually obvious.

Build the two-column model: cost removed (reporting hours recovered at your loaded hourly rate) and revenue protected and added (retention improvement plus sales conversion lift). Show break-even. Most agencies find it within a quarter.
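The two-column model can be sketched as a short Python function. Everything here is an assumption for illustration: the function name, the $75 loaded hourly rate, the $300 monthly tooling cost, and the one-time $6,000 setup cost are hypothetical, and break-even is defined simply as setup cost divided by net monthly benefit.

```python
def monthly_roi_model(hours_recovered, loaded_hourly_rate,
                      revenue_protected, revenue_added,
                      tooling_cost, setup_cost):
    """Two-column model: cost removed vs. revenue protected and added (monthly)."""
    # Column 1: reporting hours recovered, valued at the loaded hourly rate.
    cost_removed = hours_recovered * loaded_hourly_rate
    # Column 2: retention improvement plus sales conversion lift.
    revenue_side = revenue_protected + revenue_added
    net_monthly_benefit = cost_removed + revenue_side - tooling_cost
    # Break-even: months of net benefit needed to repay the one-time setup cost.
    break_even_months = (setup_cost / net_monthly_benefit
                         if net_monthly_benefit > 0 else None)
    return {
        "cost_removed": cost_removed,
        "revenue_protected_and_added": revenue_side,
        "net_monthly_benefit": net_monthly_benefit,
        "break_even_months": break_even_months,
    }


# Hypothetical inputs: 10 hours recovered, one extra $3,000 retainer closed.
model = monthly_roi_model(
    hours_recovered=10, loaded_hourly_rate=75,
    revenue_protected=0, revenue_added=3000,
    tooling_cost=300, setup_cost=6000,
)
print(model)
```

With these made-up inputs the setup cost is repaid in under two months; the useful part is seeing which of your own inputs moves the break-even point the most.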

Conclusion

Automation fixes the mechanics of reporting, but clients never bought mechanics. They bought confidence — that someone will catch problems early, explain trade-offs clearly, and point to the next action before the month closes badly.

An agency that treats AI analytics as the interpretation layer, grounded in standardized metrics and delivered proactively, turns reporting from a deliverable into a product. That product scales delivery without scaling headcount, strengthens retention conversations without heroics, and gives new business a live proof point you can show in the pitch — not promise in a slide.

Frequently Asked Questions

How does AI analytics help agencies win new clients, not just serve existing ones?

AI analytics helps in sales when the agency can demonstrate interpretation live, not just promise better service. Showing a prospect their own data — analyzed and explained in plain language using the same workflow the client will get post-close — builds trust in the agency’s operating system, not just its credentials. A prospect who asks a question and gets an immediate, grounded answer experiences the agency’s capability rather than being told about it.

What is the difference between automated reporting and AI-powered reporting for agencies?

Automated reporting pulls data into a consistent view and delivers it on a schedule without manual work. AI-powered reporting adds an interpretation layer on top — anomaly detection, narrative summaries, and plain-English Q&A so the report answers ‘what changed, why, and what to do next.’ Automation ships numbers. AI helps the agency ship decisions.

How many clients can an account manager realistically handle with AI-assisted reporting?

It depends on client complexity and channel mix, but the bottleneck AI addresses most directly is interpretation time — the recurring work of turning data into narrative. An account manager who currently spends 15 to 20 hours a month on reporting across their client book can often support 30 to 40% more accounts if AI handles first-pass narrative generation and proactive alert drafting. Model it against your own team’s actual hours before projecting headcount savings.

Will clients trust AI-generated insights, or will they want human analysis?

Clients trust outcomes when the numbers stay consistent and the agency stands behind the recommendations. The right model is AI-assisted, not AI-replaced: a human owns the client relationship, the action plan, and the risk calls. The AI handles first-pass interpretation and anomaly flagging. Clients also need to know the underlying math is accurate — AI should explain results while a real analytics engine runs the calculations.

How long does it take to see ROI from switching to AI analytics for client reporting?

Operational ROI — hours recovered from manual compilation — typically appears in the first reporting cycle after automation is in place. Strategic ROI takes longer because it requires changing how reviews run, building proactive workflows, and letting retention improvements compound. An agency that tracks hours saved and connects proactive touchpoints to renewal conversations can usually build a defensible payback case within one to two quarters.