Running the perfect Facebook ad is harder than it sounds.

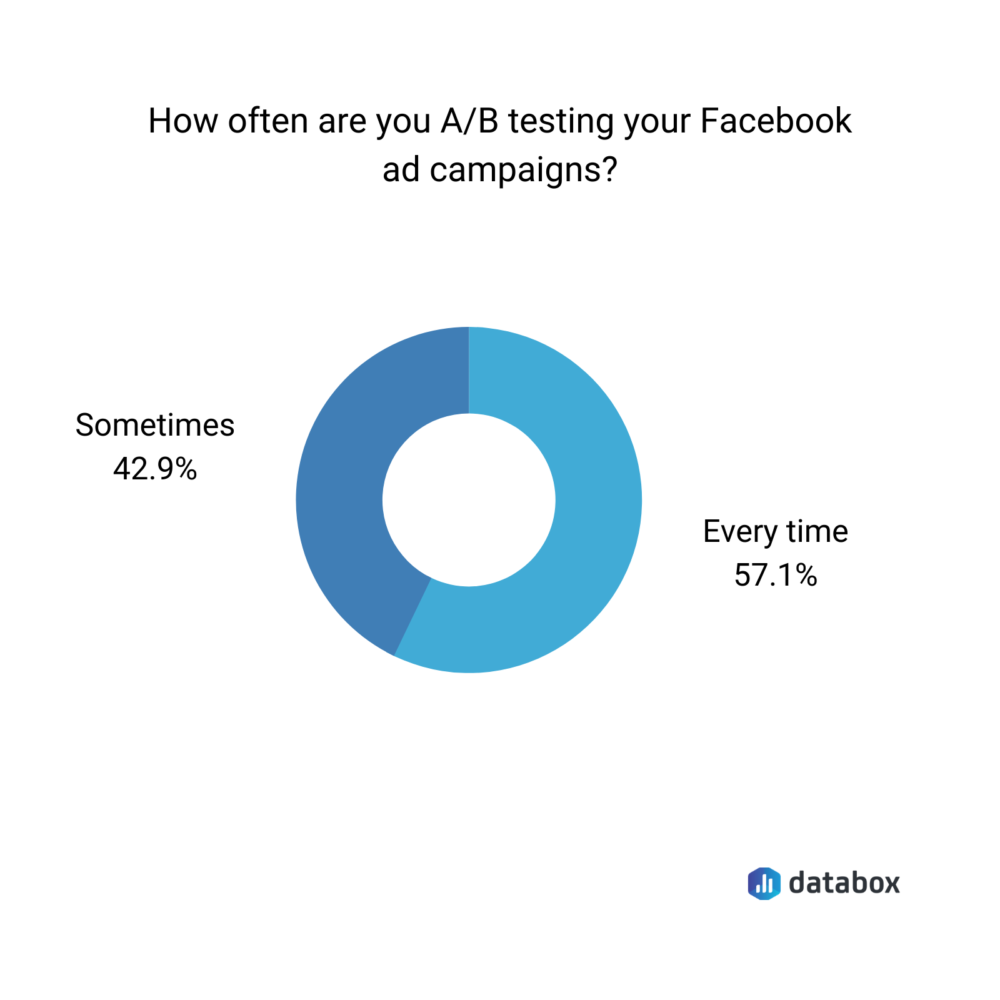

There are so many elements to consider that it can be hard to determine whether or not your strategy yields the desired results. But that’s where Facebook Ad testing comes in. A great way to know how your Facebook Ad is performing, and if there’s room for improvement, is to run an A/B test.

Among other things, split testing can show that a different call to action would fit better or that a different photo would earn more clicks. Whatever the case may be, a Facebook A/B test can tell you what your ad needs to reach a wider audience and give you more bang for your buck.

How to Set Up A/B Testing on Facebook Ads

When it comes to setting up your Facebook Ads, there are a few ways you can go about it. The most common approach is through the Ads Manager Toolbar.

To use this approach, follow these steps:

- Once logged into the Facebook Ads Manager, select the Campaigns tab and click A/B Test.

- You’ll then choose the variable you want to test. The options are audience, creative, placements, delivery optimization, product set, or more than one variable.

- After you’ve chosen a test type, set how long you want the test to run. You can check on its status in Ads Manager at any time.

To check the status or progress of your A/B test in the Ads Manager, head over to the “Account Overview” tab and click on the icon that looks like a chemistry beaker.

Facebook A/B Testing Best Practices

You’ve set up your A/B test within Facebook Ads… now what? If you’re unsure where to start, you’re not alone. Take advantage of these 12 Facebook A/B testing tips to make the most out of your test.

Want to learn more about a specific tip? Jump ahead to:

- Limit the changes you make

- Change the creative

- Take advantage of the Facebook algorithm

- Choose variables wisely

- Utilize Dynamic Creative

- Put a limit on ad spend

- Split your audience

- Consider the time frame

- Test your call to action

- Focus on a specific goal

- Consider human psychology

- Implement your own code

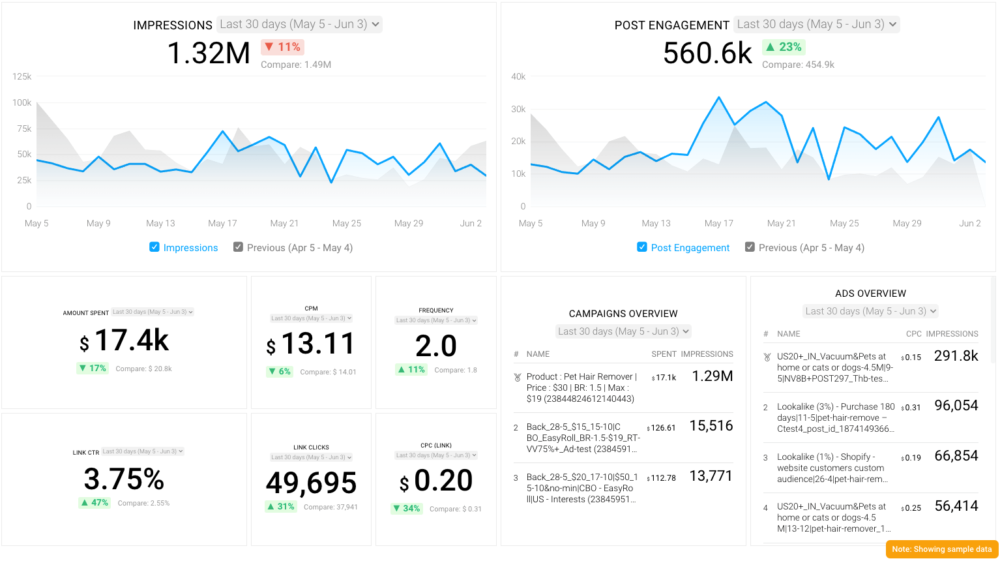

PRO TIP: What’s the overall engagement of your ad campaigns?

Want to make sure your Meta ads are performing and trending in the right direction across platforms? There are several types of metrics you should track, from costs to campaign engagement to ad-level engagement, and so on.

Here are a few we’d recommend focusing on.

- Cost per click (CPC): How much are you paying for each click from your ad campaign? CPC is one of the most commonly tracked metrics, and for good reason: if it’s high, your overall return on investment is likely to be lower.

- Cost per thousand impressions (CPM): If your ad impressions are low, it’s a good bet everything else (CPC, overall costs, etc.) will be higher. Also, if your impressions are low, your targeting could be too narrow. Either way, it’s important to track and make adjustments when needed.

- Ad frequency: How often are people seeing your ads in their news feed? Again, this could signal larger issues with targeting, competition, ad quality, and more. So keep a close eye on it.

- Impressions: A high number of impressions indicates that your ad is well optimized for the platform and your audience.

- Amount spent: Tracking the estimated amount of money you’ve spent on your campaigns, ad sets, or individual ads will show you if you’re staying within your budget and which campaigns are the most cost-effective.
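To make the definitions above concrete, here is a minimal Python sketch of how these metrics fall out of raw totals. The `AdStats` class and its field names are hypothetical (they are not Ads Manager export columns), and the numbers are made up purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    """Raw totals for one campaign. Field names are illustrative assumptions."""
    spend: float      # amount spent, in your account currency
    impressions: int  # number of times the ad was shown
    reach: int        # number of unique people who saw the ad
    clicks: int       # number of clicks on the ad


def cpc(stats: AdStats) -> float:
    """Cost per click: spend divided by clicks."""
    return stats.spend / stats.clicks if stats.clicks else 0.0


def cpm(stats: AdStats) -> float:
    """Cost per thousand impressions."""
    return stats.spend * 1000 / stats.impressions if stats.impressions else 0.0


def frequency(stats: AdStats) -> float:
    """Average number of times each person reached saw the ad."""
    return stats.impressions / stats.reach if stats.reach else 0.0


# Made-up example numbers, not real campaign data.
example = AdStats(spend=50.0, impressions=10_000, reach=4_000, clicks=125)
print(f"CPC: {cpc(example):.2f}")
print(f"CPM: {cpm(example):.2f}")
print(f"Frequency: {frequency(example):.2f}")
```

With these made-up totals, CPC works out to 0.40, CPM to 5.00, and frequency to 2.50, meaning each person reached saw the ad two and a half times on average.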

Tracking these metrics in Facebook Ads Manager can be overwhelming since the tool is not easy to navigate and the visualizations are quite limiting. It’s also a bit time-consuming to combine all the metrics you need in one view.

We’ve made this easier by building a plug-and-play Facebook Ads dashboard that takes your data and automatically visualizes the right metrics to give you an in-depth analysis of your ad performance.

With this Facebook Ads dashboard, you can quickly discover your most popular ads and see which campaigns have the highest ROI, including details such as:

- What are your highest performance Facebook Ad campaigns? (impressions by campaign)

- How many clicks do your ads receive? (click-through rate)

- Are your ad campaigns under or over budget? (cost per thousand impressions)

- What are your most cost-efficient ad campaigns? (amount spent by campaign)

- How often are people seeing your ads in their news feed? (ad frequency)

And more…

You can easily set it up in just a few clicks – no coding required.

To set up the dashboard, follow these 3 simple steps:

Step 1: Get the template

Step 2: Connect your Facebook Ads account with Databox.

Step 3: Watch your dashboard populate in seconds.

1. Limit the changes you make

Starting off the tips from our experts is the tip we received the most — limit the changes you make in your A/B test to just one.

For this, Yoram Baltinester at Decisive Action Workshops explains, “This sounds basic, but I see that mistake over and over again: when you A/B test, only change one thing. This may be subtle but so are its implications.

For example, changing a photo in your ad and moving it from the right to the left side of the ad are two changes. The result might be worse than the original, even if moving the location of the photo is a brilliant change because the new photo was a poor choice. You would miss out on the opportunity to test the new location with the original photo, which might have converted better than the original.”

Adding to this tip is John Ross from CPA Prep Insights. “My best tip for A/B testing Facebook ad campaigns is to split test only one variable at a time, then select the winner from the split test, and move on to the next variable until you optimize your campaign. If you split test multiple variables at once and one campaign significantly outperforms the other, you’ll never know which variable caused the better performance. Was it a different audience? Different creative? You need to figure out the ideal setting for each variable one at a time so you can drill down to the overall best campaign.

For example, in a recent campaign promoting our CPA exam prep courses, we did just this — split testing one variable at a time in one-week increments. Once we narrowed down the best creative after split testing three, we moved onto split testing different groups. Then once we got the ideal audience pinned down, we even tested ad placement. Bottom line, it is best to tinker in short increments one at a time,” explains Ross.

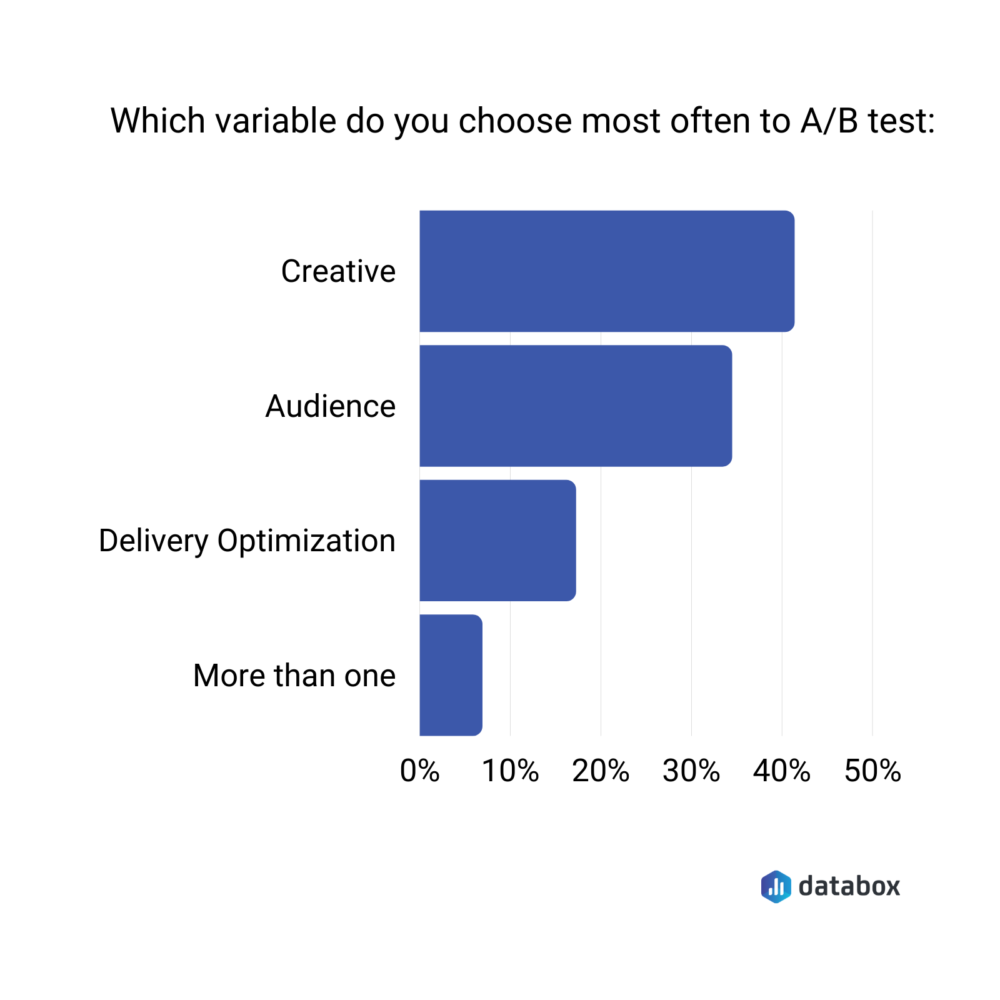

Interestingly, both agencies and SMBs split test design more often than other elements according to this study on Facebook ads performance.

Another expert elaborating on why it’s so important to only change one detail at a time is Logan Haggerston from HCB Solar. Haggerston shares, “To get the most out of A/B testing with Facebook ads, we test one variable at a time to find the most effective format, placement, and audience.

For example, does a question or a call to action work better in headlines? To test this, everything else about the creative elements and setup will be the same to produce the most accurate results possible. We find a call to action works best in headings, but what about the ad text? Does longer or shorter text perform better? Once we’ve tested that and made changes, we can look at images – does a single image or multiple images perform better? And so on the testing goes, until you have a fully optimized ad template that you know gets results. This is a lot of time upfront, but saves you time and guessing over the long term.”

Unsure which element to run a test on first? Csilla Borsos from Creatopy recommends keeping your goals in mind as you decide. “An important strategy for A/B testing your Facebook campaigns is focusing on only one variable at a time. For example, if you want to see the difference between your ads, audiences, or maybe your landing page, you shouldn’t include all these variables in your test at once. Start with what’s most important for your goals. Create an A/B test for, let’s say, your ads first. When I want to see how an older headline performs compared to a new one, I duplicate the ads and change only the headlines. Everything else should remain identical. This is important because if you change other elements as well, you won’t know for sure that the new headline is the reason your ad performs better/worse than before,” explains Borsos.
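The "change only one thing" rule is easy to check mechanically before you launch a test. Below is a minimal Python sketch, assuming you describe each ad as a plain dictionary of settings. The keys (`headline`, `image`, `cta`, `audience`) and the helper names are hypothetical and are not part of any Facebook tool.

```python
from __future__ import annotations


def changed_fields(control: dict, variant: dict) -> list[str]:
    """Return the sorted names of settings that differ between two ads."""
    keys = sorted(set(control) | set(variant))
    return [key for key in keys if control.get(key) != variant.get(key)]


def is_single_variable_test(control: dict, variant: dict) -> bool:
    """True only when exactly one setting was changed."""
    return len(changed_fields(control, variant)) == 1


# Hypothetical ad settings used purely for illustration.
control = {
    "headline": "Book your stay today",
    "image": "pool.jpg",
    "cta": "Book now",
    "audience": "Lookalike 1%",
}

# Only the headline changes, so any difference in results can be traced to it.
headline_variant = {**control, "headline": "Ready for an ocean view?"}

# Two settings change at once, so the result would be ambiguous.
messy_variant = {**control, "image": "balcony.jpg", "cta": "Learn more"}

print(changed_fields(control, headline_variant))
print(changed_fields(control, messy_variant))
```

Running a check like this on every duplicated ad catches the accidental second change (a different image, a different placement) that would make the result impossible to interpret.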

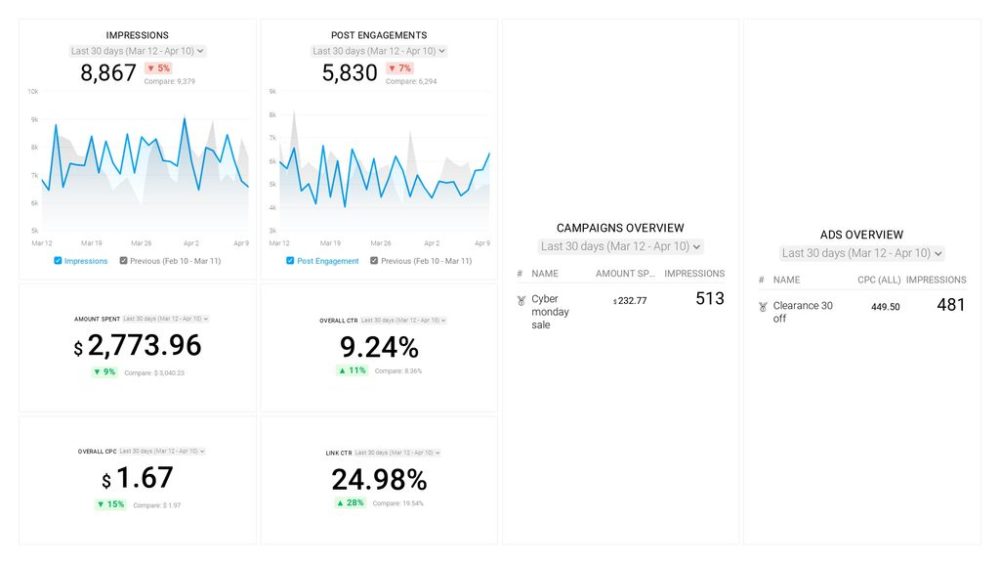

Editor’s note: Identify your most important variables with this Facebook Ads Campaign Review dashboard that provides you with insights about ad engagement, click activity, money spent, and much more.

2. Change the creative

Going hand in hand with the above is the advice to update the creative first when running an A/B test.

Margaret Geiger from Twelve31 Media explains, “When A/B testing Facebook ads, it’s important to keep the ads under the same campaign but change the creative. For example, if you are an oceanfront hotel and are promoting bookings, one ad should showcase the interior of the hotel and one showcase the exterior, or one showcase the ocean view from the balcony, and one showcase the pool. You’ll be able to see what photos and videos are more well-received by your audience and you’ll know which ads to optimize and use moving forward so you aren’t overspending.”

PRO TIP: Changing ad-creative and adjusting the target audience can drastically reduce Facebook Ads CPM.

3. Take advantage of the Facebook algorithm

Whether you love it or you hate it, it could be in your best interest to take advantage of the Facebook algorithm as you run your A/B test.

For this, Sam Harper at Sam Harper.co recommends, “Trust the algorithm. Facebook knows who your customer is and their algorithm works. We don’t use the A/B test functionality but accomplish the same purpose through Facebook’s campaign budget optimization (CBO). We’re trusting the algorithm to determine the best way to spend our budget across various ad sets and creative ways.”

4. Choose variables wisely

If this is your first Facebook A/B test, and you’re unsure where to start, always think about what the most important element of the ad is for you and your organization.

Meara McNitt at Online Optimism elaborates on this to say, “Start big and work your way into the nitty-gritty when choosing what to test. What’s more important, finding out if a person smiling makes a difference, or finding out if you’re using the right CTA? Pick the variables that matter most to your campaign and find answers to those first.”

5. Utilize Dynamic Creative

If you’re interested in utilizing the Dynamic Creative feature for your A/B test, Drew Estes from Massview breaks it all down for you.

“Use Dynamic Creative! Facebook gives you the ability to add multiple variations of ALL your creative assets (that means images, headlines, descriptions, and text, and even call to action buttons). So add all the creative you want to test, then run your ads until you’ve gotten significant data.

Then, under the Ad Set level, select Breakdowns and click ‘By Dynamic Creative Element’ to see what performed best in each creative category. From there you can delete what’s not working, and even start new experiments.

We recommend A/B testing pay-per-click campaigns to all of our Ecommerce clients at Massview because it’s so effective at improving ad results. This Facebook strategy is especially useful if you’re an Ecommerce merchant selling physical products because you can get much more immediate conversion data (purchases), so you can easily optimize for sales rather than just clicks or leads,” explains Estes.

6. Put a limit on ad spend

No matter how big or small your budget is, it’s important when A/B testing on Facebook to implement a limit on your ad spend.

“When you’re A/B testing campaigns, you must cap the ad spend for each variation, so you can fairly compare the performance under the same spend. A common mistake is to let the algorithm decide using CBO (Campaign Budget Optimization), and usually it will put all the budget in the asset that performs the best initially, and rule out the other variations too soon,” shares Felix Yim at GrowthBoost.

7. Split your audience

Still unsure which element to change in your A/B test? Alistair Dodds at EIC Digital Marketing Agency recommends splitting your audience first.

Explaining further, Dodds shares, “Split test your audiences first. Ensuring you are targeting the right audience with the right message is the key to success. So we always start with split testing audiences like Lookalike 1% vs. Interests, or Lookalike 1% vs. 2%. The goal is to let the data lead you to the right audience rather than letting your subjective opinion get the better of you and lead you to the wrong audiences.”

8. Consider the time frame

Another element to pay close attention to when running Facebook A/B tests is the time frame.

For instance, Catriona Jasica at Top Vouchers Code explains, “One of the effective tips for A/B testing your Facebook ad campaigns is to use an ideal time frame. If you are not sure about the right time frame to set, then the best way would be to start with three or four days. Tests shorter than this time frame might give inconclusive results.”

Adding to this tip is Zoe Gadd at Vertical Leap. “Your split test needs time to statistically determine a winner. This can be achieved in a few days to a few weeks, depending on your audience size. Be mindful of the fact any edits during the ‘learning phase’ will reset the process back to the beginning, so as tempting as it may be, hold off on those tweaks!” shares Gadd.

Finally, Ansh Gupta at BuySellEmpire thinks that four days is the sweet spot. “When setting up your A/B test, you have the option to run it for up to 30 days. The time frame is flexible in terms of what your aim is. However, I’d suggest running the test for four days, as it is aligned with Facebook Business Center’s guidelines. Moreover, four days are more than enough for technology to run the test and produce accurate results. You’ll have sufficient data to analyze as well,” Gupta shares.
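If you export your results and want a rough sense of whether a difference between two variants is more than noise, a two-proportion z-test is one common check. The sketch below is a generic textbook test written with Python’s standard library only. It is not the method Facebook uses to pick a winner, and the function name and sample numbers are assumptions made for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Compare two conversion rates.

    conv_a / conv_b: conversions for variants A and B
    n_a / n_b: clicks (or impressions) for variants A and B
    Returns (z, two_sided_p_value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so nothing to distinguish
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up numbers: 50 conversions from 1,000 clicks vs. 80 from 1,000.
z, p = two_proportion_z_test(50, 1000, 80, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (0.05 is a common threshold) suggests the gap is unlikely to be chance alone. With only a handful of conversions per variant the test rarely reaches that threshold, which is one reason very short tests tend to come back inconclusive.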

9. Test out your call to action

While a few experts have already mentioned calls to action, we think the topic deserves its very own call-out… pun intended.

When it comes to A/B tests on Facebook, changing up and testing your call to action is crucial if you want to see results. Melanie Musson at ClearSurance.com elaborates further to say, “Test your call to action. Arguably, your CTA is the most important part of your ad campaign and small changes sometimes affect the result in big ways. When A/B testing CTAs, make sure your comparison only contains one difference. That way, you can pinpoint the detail responsible for improving or hindering your ad.”

10. Focus your test on a specific goal

Everything about your Facebook A/B test, from what you decide to change to your ad spend, should always center around a specific goal that you have in mind for the end result.

For this tip, Scott Nelson at MoneyNerd explains, “The most effective tip would be to focus on a measurable or clear hypothesis. So, form a question in which the test results will provide a clear and measurable answer to.

For example, ‘does enlarging the link to the website improve conversion’ or ‘does image X retain more views’. Then with the results, you will be provided with a clear answer to your question and hopefully some measurable results for you to take forward into the next test.”

Adding to the importance of having a specific goal in mind is Lee Cris at Cloom Tech. Cris elaborates to say, “It would help if you understood what your ultimate goal is at the end of the campaign. That will advise you of the role and route to take when formulating your strategy. Equally, it will help you find better results after pairing your concerns and the target market you are looking to connect with.”

Finally, Grace Woinicz from The Brilliant Kitchen suggests using a hypothesis method when implementing an A/B test. “A/B testing is a straightforward testing method that shouldn’t be complicated with too many specific requirements. Similarly, your hypothesis should be clear and easy to understand. A hypothesis should be made keeping A/B testing in mind so it can be answered appropriately. Additionally, you should consider choosing the right type of A/B testing that is the correct option to answer your hypothesis. Using a simple hypothesis is useful because that helps you achieve measurable results from your tests. Measurable results are then helpful for bringing improvements to your ad campaigns,” explains Woinicz.

11. Consider human psychology

A more interesting approach was suggested by Gareth Bain from Got Legs Digital. Bain suggests really taking a deep dive into how your audience thinks in order to get their attention in the best way possible.

“What we have found works well for our clients is understanding the human psychology behind people’s attention. As human beings, we process information in three different ways – THINK, FEEL, and KNOW. When you create your adverts you need to use a mixture of these three elements to attract and retain the visitor’s interest. So our process is first we test the image to get the attention (mixture of Feel and Know). Once we know which image works, we then focus on the copy (Think). Then what we do is create multiple different variants – Know, Feel, Think, or Feel, Know, Think until we find a winning combination. Then we remarket back to audiences who did not convert with the opposite approach as they perhaps process information differently and we did not appeal to them correctly,” explains Bain.

12. Implement your own code

Finally, Tarletta Williams at Black Dragon Marketing recommends taking the code into your own hands and not relying on Facebook analytics alone. Williams shares, “Make sure that you don’t only rely on Facebook’s analytics. Include tracking code on each of your sites to determine not only which ad got clicked on, but how often they converted when they got there. It’s possible to have a high click ad on Facebook with low conversions and a low click ad with high conversions, you’ll want to be aware of both.”
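To see why clicks and conversions need to be looked at together, here is a minimal Python sketch that combines click data with conversion counts from your own site tracking. The function name and the figures for the two ads are made up for illustration and do not come from any real campaign or API.

```python
def funnel_rates(impressions: int, clicks: int, conversions: int):
    """Return (click-through rate, conversion rate among clickers)."""
    ctr = clicks / impressions if impressions else 0.0
    conversion_rate = conversions / clicks if clicks else 0.0
    return ctr, conversion_rate


# Hypothetical ads: A gets more clicks, B turns more of its clicks into sales.
ads = {
    "Ad A": {"impressions": 10_000, "clicks": 500, "conversions": 5},
    "Ad B": {"impressions": 10_000, "clicks": 200, "conversions": 20},
}

for name, totals in ads.items():
    ctr, conversion_rate = funnel_rates(**totals)
    print(f"{name}: CTR {ctr:.1%}, conversion rate {conversion_rate:.1%}")
```

In this made-up case, Ad A wins on clicks but Ad B produces four times as many conversions from the same number of impressions, which is exactly the pattern click data alone would hide.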

Testing, testing, 1, 2, 3!

No matter the goal you set out with when running your Facebook A/B test, you could be surprised with what you find out! Remember that no two A/B tests have to be the same, and it can be exciting to play around with the various elements you can change. You never know what you’ll uncover.