Table of contents

PostgreSQL is one of the most popular open-source object-relational database management system (ORDBMS) with an emphasis on extensibility and standards-compliance. It’s already 20 years old! PostgreSQL, beginning with version 9.0, includes built-in binary replication, based on shipping the changes (write-ahead logs) to replica nodes asynchronously.

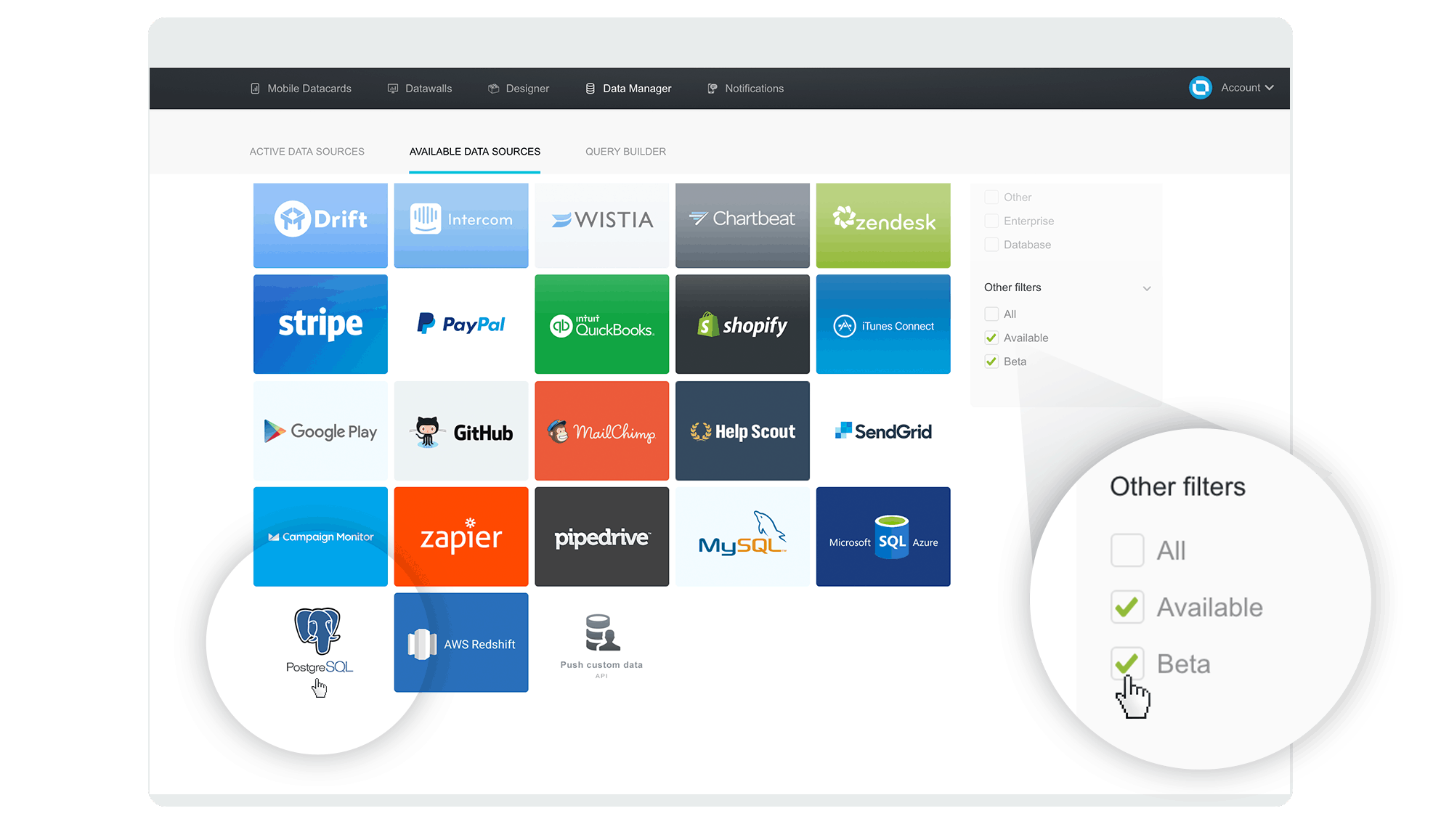

At the moment, MySQL, PostgreSQL, Microsoft Azure SQL and Amazon Redshift are supported out of the box on Databox. You have the data ready in your database, now it just needs to get visualized in an easy and concise manner so everyone – even your boss – can use it.

So, let’s make this happen!

What Will We Accomplish?

Firstly, we’ll setup a PostgreSQL server, then we’ll connect it to Databox and confirm that the connection is working. Lastly, we will create a Datacard visualizing the data. All this without a single line of code – except for the PostgreSQL query, of course 🙂

1. Prepare Your PostgreSQL Database and Host

To get started, you’ll need to allow remote connections from Databox’s public IP 52.4.198.118 to your database. The necessary steps to do that will depend on your database, server infrastructure and firewall. Our IP must be able to connect to the database port directly.

For our purposes, let’s assume your PostgreSQL server is up and running, reachable from internet addresses and accepting TCP/IP connections on some TCP port (5432 by default).

We will create a user named databox_ro with permissions to remotely access the database, aptly named mydb in our example:

CREATE USER databox_ro WITH PASSWORD 'secretPassword'; GRANT ALL ON DATABASE mydb to databox_ro; GRANT SELECT ON ALL TABLES IN SCHEMA public TO databox_ro; -- at least 9.0 GRANT SELECT ON ALL SEQUENCES IN SCHEMA public TO databox_ro; -- at least 9.0

Note: for the sake of this example, we have given this user a SELECT permission on all tables in our database. Permission could be given to select from one table only, or better yet: create a custom view and give permissions to select from this view only. But this is a bit beyond the scope of this document. A basic example on how to achieve this can be read here.

PostgreSQL up to v9.0 has a bit different syntax, so check the docs.

Configure PostgreSQL server to listen on all IPs, as by default it listens on local interface only. Open /etc/postgresql/9.5/main/postgresql.conf (default for Ubuntu and Debian) and check that it contains:

listen_addresses = '*' # Listen on all host IP addresses available port = 5432 # Listen on this port, default is 5432

If you’ve made any changes, restart the PostgreSQL server and it’s ready to accept remote connections.

service postgresql restart

Add created user access from Databox IP to our newly created database. Add the following to pg_hba.conf and reload the database:

host mydb databox_ro 52.4.198.118 255.255.255.255 md5

Port 5432/TCP, which is PostgreSQL default port, should be accessible from our VPC IP mentioned above. This must be done on your firewall, Linux iptables example:

iptables -A FORWARD -s 52.4.198.118/32 -p tcp --dport 5432 -j ACCEPT

Note: you might have to substitute FORWARD with INPUT if your Linux box has a public IP and the database runs on the host itself.

Your server should now be successfully set up to accept requests from our VPC IP to your chosen database/table, using a chosen user and password.

Load some sample data and make some queries in the local console:

sudo -u postgres psql

1.1. SSL Connection (server side setup)

With SSL support compiled in, the PostgreSQL server can be started with SSL enabled by setting the parameter ssl to on in postgresql.conf. The server will listen for both normal and SSL connections on the same TCP port, and will negotiate with any connecting client on whether to use SSL.

2. Connect Your Database to Databox

With our database ready, the next logical step is to connect it and test that it’s returning the data we want.

- Log in to the Databox web application and click on the ‘Data Manager’ tab,

- Go to Available data sources option and find the PostgreSQL tile. At the time of this writing, PostgreSQL is still in beta. You will need to check the Beta checkbox on the bottom right to see it,

- Hover over it with your mouse and click the ‘Connect’ button that slides up into view

- Enter your connection data in the popup and click the ‘Activate’ button. Default port 5432 is fine in most cases.

- If all went well, the popup will close shortly and you’ll get a “connected!” message.

Great! We have just successfully connected our database to Databox. In the next step, we’ll write a custom query that will regularly fetch data from your database and make it available for use in any Datacard.

Troubleshooting: If you get a “wrong credentials” message, double-check your user data. If you’re stuck on ‘Activate’ for a minute or so, it’s probably having issues connecting to your database host due to firewall / server / networking issues.

2.1. SSL Setup

Just check the SSL checkbox in New connection dialog and try connecting. If everything is setup OK with server, client should connect via SSL encrypted connection.

3. Visualize Your Data with Databox Designer

Now that the database is connected, we will use the Designer to query, shape and display the data in a format that’s most appropriate and useful for our needs:

- Choose an existing Datacard or create a new one (how?)

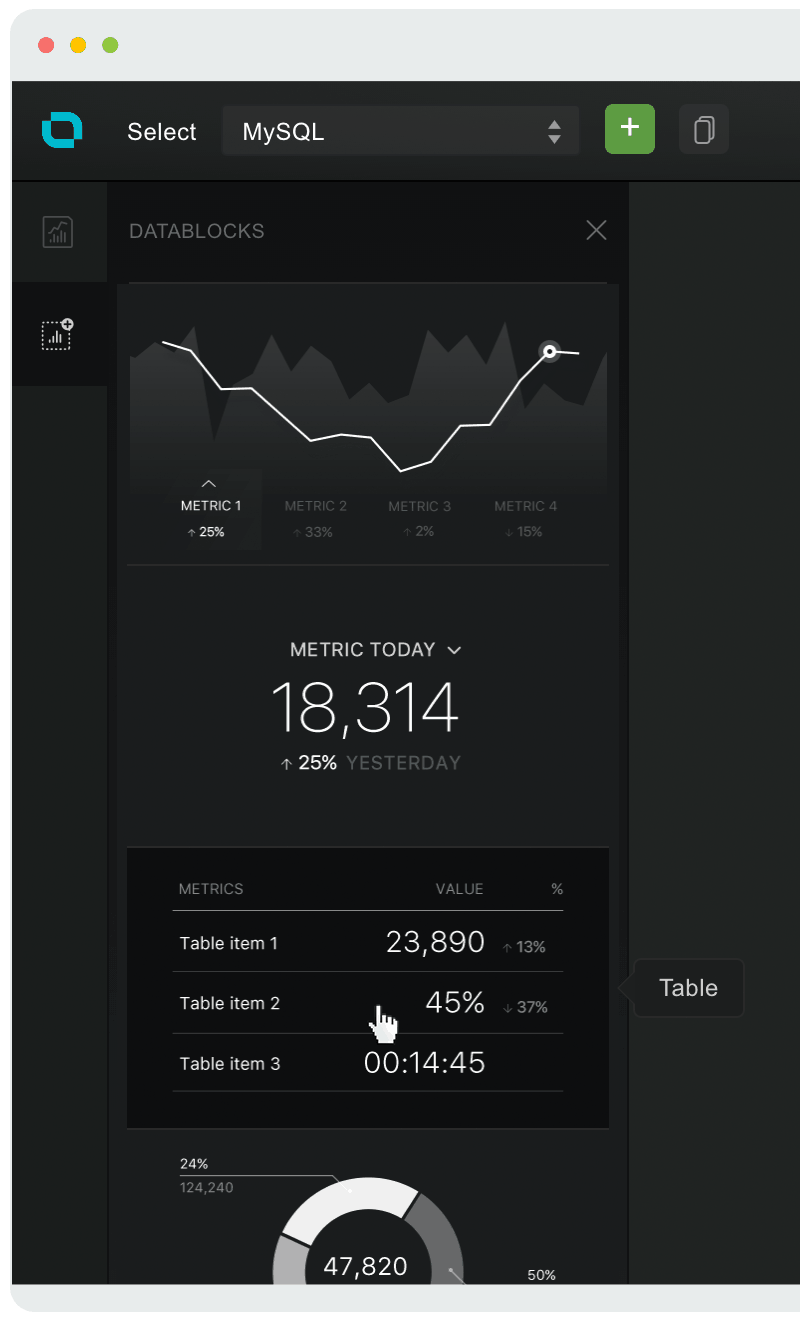

- Choose Datablocks icon on the left

- Drag & drop the Table block onto your Datacard

- For our example, where we will have a dynamic table (the pushed metric key has attributes/lines), we will switch to gather data from ‘Single metric’ in the properties panel on the right

- Select your newly created PostgreSQL data source from the Datasource dropdown on the right

- Click on the Metric dropdown below Datasource and choose ‘Custom Metric from ‘Metric Builder’

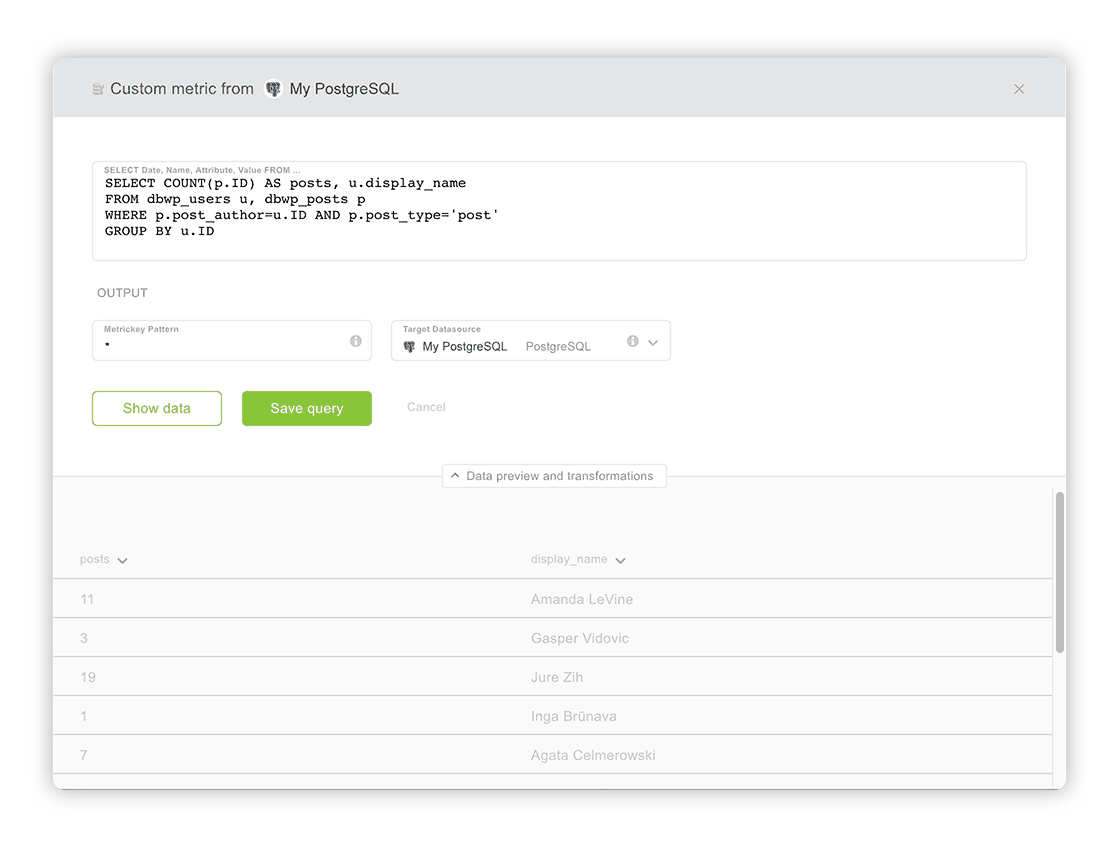

- Write your SQL query in a popup window that appears. For this example, we will connect to our PostgreSQL with a basic query that returns a list of WordPress authors with their post count:

SELECT COUNT(p.ID) AS posts, u.display_name, p.date AS date FROM dbwp_users u, dbwp_posts p WHERE p.post_author = u.ID AND p.post_type = 'post' GROUP BY u.ID

- Now click on ‘Show Data,’ below. Your query result should now be displayed at the bottom, similar to this, depending on your data, of course:

- (optional) You can rename each column (which will become a metric key in Databox), by clicking on the arrow beside it and typing in a new name.

- (optional) You can enter a different metric key name pattern or just leaving the asterisk (*), which will create a metric key with the same name as pushed. By default the output (target datasource, where the data gets pushed to), is already selected and is the same as your source data connection. You can use other tokens if needed.

- Once you are satisfied with the data you see, just click ‘Save Query.’

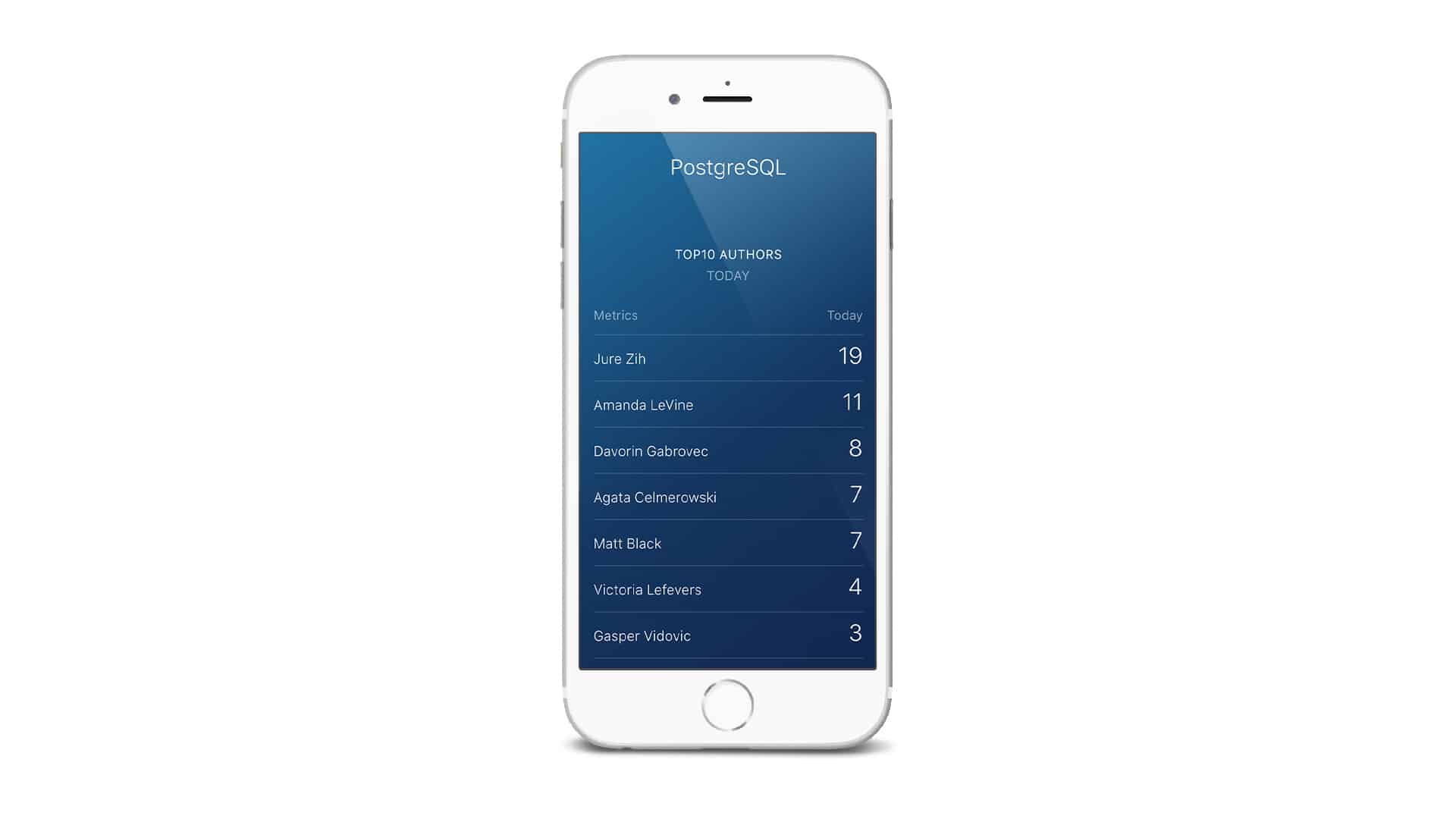

- Tada! After you saved your custom query, you should see the data on the table. If not, check if the right data source and metric are selected. In our example it’s the ‘My PostgreSQL’ data source and ‘└ posts|name’ metric, because we’re pushing posts by names. The time interval should be set to ‘Today,’ to see the latest data.

We have just written a custom SQL query and displayed its results. Databox will continuously, each hour, fetch data from this resource and store it in the selected target data source (in our example ‘My PostgreSQL’).

Writing Queries Basics

Each query must contain a date column containing a valid date, named date. Let’s take a following SQL query for example:

SELECT salary_date AS date, salary FROM employees

In table employees we have a date column named salary_date. As Databox expects column with a name date, we select our salary_date column as date.

Salary is another column, containing a number, column name will be pushed as metric key named salary. This query is valid and can be pushed to Databox.

Troubleshooting: If you don’t see any data, double-check your SQL query, try it directly on your database. If it’s not displaying results there, you have an error somewhere in your query. Also check that PostgreSQL user has necessary permissions to access the database from Databox IP.

Well done! Your database is now connected to Databox, queries can be executed and then displayed on your mobile / big screen / computer.

Go ahead and explore further. Add more queries, add blocks, explore different types of visualizations. Make that perfect Datacard (or Datawall of course) you always needed but didn’t know how to get. Now you can! Clean and professional, right at your fingertips. Only data that matters, without clutter. The possibilities are truly endless.

Ready to try it for yourself? Signup for free today and let us know how it went for you.

Remember: we’re always glad to help if you run into any obstacles!